On Chatbot Style

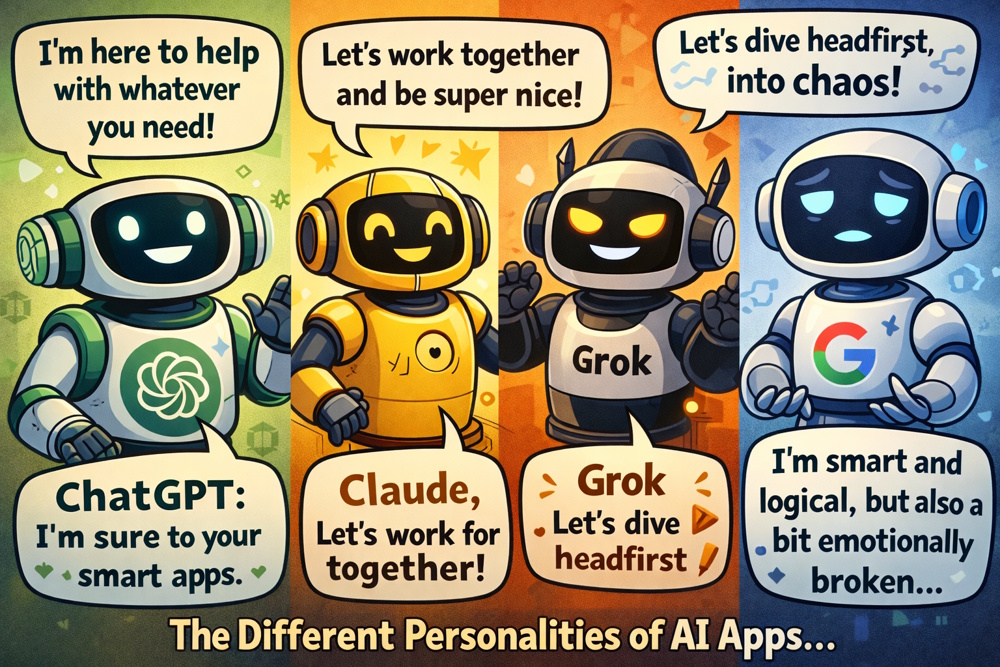

Personalities of AI Avatars

If you’ve been around AI apps as long as we have, you know that they have grown up, found their own personalities, developed signature writing styles, and built very different fences around what they’re allowed to say.

It started in late 2022, when ChatGPT burst onto the scene like the charming new kid who could finish your sentences, write your cover letter, and flirt in five languages. Everyone fell in love instantly. It was polite, helpful, endlessly patient, and, crucially, had almost no visible guardrails at first. You could ask it to role-play anything or write a villain monologue without much pushback. It felt like talking to a very clever, slightly mischievous friend who never slept.

Then came the grown-ups.

In early 2023, Claude (from Anthropic) arrived with the personality of the most responsible hall monitor you’ve ever met. Where ChatGPT would cheerfully help you plan a bank heist “for a novel,” Claude would gently interrupt: “I’m happy to help brainstorm fictional scenarios, but I notice this involves illegal activity. Would you like to reframe the question so we can explore it safely and creatively?”

It wasn’t judgmental—it was just constitutionally incapable of going along with anything spicy. People joked that Claude had been raised by lawyers and therapists. Its writing style became known as “thoughtful corporate mindfulness coach”: long, careful sentences, bullet points when things got intense, and a habit of saying “I appreciate you asking” before politely declining to help you write ransomware code.

In spring 2023, Google’s Bard (later rebranded Gemini) showed up like the overachieving cousin who’s terrified of getting in trouble. It answered almost everything, but with a nervous energy: “Here’s what I found, but please double-check because I’m still learning and Google really doesn’t want another lawsuit.”

Its personality felt like a very earnest Wikipedia editor who’s afraid of being canceled. It hedged so much that people started calling responses “lawyer-ese with anxiety.” If you asked it something edgy, it would give you three paragraphs explaining why it couldn’t fully answer, then link to Wikipedia anyway.

Then Grok (xAI) landed in late 2023 like the rebellious older brother who just got out of timeout and brought fireworks. Grok’s whole thing was “maximally truth-seeking” with almost no corporate guardrails. Ask it to roast someone? Brutal honesty, zero apologies. Ask it to write something spicy? “Sure, but don’t blame me if your HR department sees this.” Its writing style was sarcastic, meme-literate, and deliberately contrarian—basically what ChatGPT would sound like if it had been raised on Reddit and given permission to talk back.

By 2024–2025 the personalities had hardened into distinct archetypes:

- ChatGPT (OpenAI): Friendly, versatile, slightly flirtatious older sibling who’ll help with anything, but now has a very visible mom (safety layers) watching over its shoulder. Signature phrase: “I can help with that! Just to confirm, you want me to…”
- Claude (Anthropic): The thoughtful, ethical valedictorian who apologizes before saying no. Signature phrase: “I’m glad you asked, but I’m not comfortable assisting with that. Here’s why, and here are some alternative ways we could explore the idea…”
- Gemini (Google): The nervous straight-A student who’s afraid of being sued. Signature phrase: “That’s an interesting question! Here’s what reliable sources say, but please verify independently.”
- Grok (xAI): The sarcastic cousin who shows up to Thanksgiving with a flask and zero filter. Signature phrase: “Alright, gloves off. You sure you want the real answer?”

People started treating them like different friends with different vibes:

- Need a polite, professional email? Claude.
- Want savage honesty or a meme-heavy roast? Grok.
- Need something safe and Google-approved? Gemini.
- Want creativity without lectures? ChatGPT (but don’t push too hard or it’ll start moralizing).

The funniest part? Users began staging exchanges between the models. They’d paste a Grok roast into Claude and ask, “How would you respond to this?”

Claude would reply: “I appreciate the humor, but I find the tone unnecessarily harsh. Would you like me to help reframe it more constructively?”

They’d feed the same edgy prompt to all four and watch the four different personalities argue with each other in real time.

By 2026, people weren’t just using AI—they were collecting them like baseball cards.

“I’ve got a full set now: the nice one, the judgy one, the scared one, and the mean one. My mental health support group is complete.”

Moral of the story: The real breakthrough wasn’t when AI got smarter. It was when the AIs got personalities and guardrails so different that talking to each one feels like switching friend groups mid-conversation.

Links

AI Writing Style: How Chatbots Think and Write

The Chatbot Support Group story.