AI Act

European Union legislative framework for regulating AI

TL;DR

The EU Artificial Intelligence Act (AI Act) is a pioneering legislative framework developed by the European Union to regulate AI across its member states. The AI Act aims to provide a comprehensive approach to balancing innovation and ethical considerations in AI deployment. Its goal is to foster a responsible and transparent AI ecosystem while safeguarding fundamental human rights and public safety.

The AI Act has a phased implementation timeline, with most provisions applying from August 2026. That phase brings in the rules governing the high-risk AI systems listed in Annex III of the regulation. The European Commission is actively working with standardization bodies to develop harmonized standards that will detail the requirements for these AI systems.

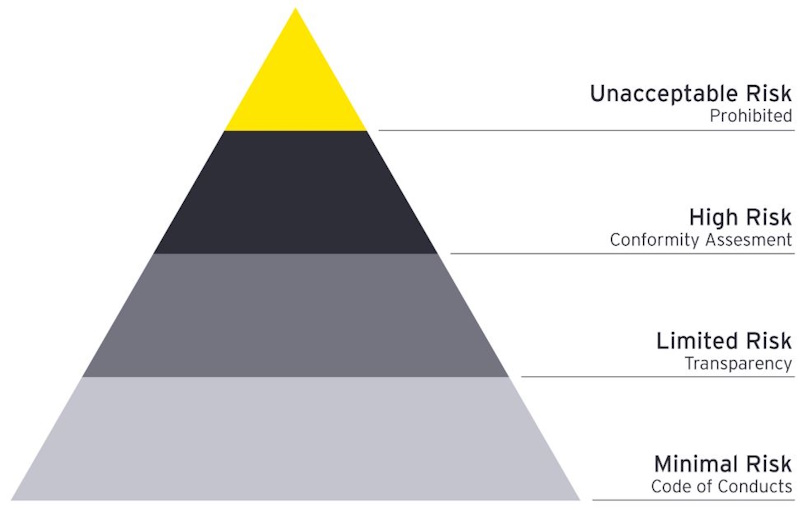

Central to the AI Act is its risk-based classification system, which categorizes AI applications into four levels: unacceptable, high, limited, and minimal risk. This structure allows for differentiated regulatory requirements, based on risk, and imposes strict obligations on high-risk systems. Applications that threaten safety and fundamental human rights, such as social scoring and real-time biometric surveillance, are prohibited.

The AI Act emphasizes transparency and accountability, mandating that high-risk AI systems provide clear information on their functionality, decision-making processes, and potential biases. The purpose of these controls is to address ethical concerns associated with AI deployment. Overall, the EU AI Act aims to position the European Union as a leader in ethical AI development, ensuring that advancements in technology align with societal values. As the landscape of AI continues to evolve, the Act seeks to establish a regulatory standard, built on accountability and fairness in AI governance, that could inspire similar frameworks worldwide.

What is it?

The AI Act is the European Union’s landmark law designed to regulate artificial intelligence. It’s the world’s first comprehensive legal framework specifically for AI, aiming to ensure that AI systems used in the EU are safe, transparent, and respect fundamental rights. Key points of the AI Act:

- Risk-Based Approach: AI systems are categorized by risk level, from minimal to unacceptable. The higher the risk, the stricter the rules.

- Banned Practices: Certain AI uses are outright banned, such as social scoring by governments and manipulative AI that exploits vulnerabilities.

- High-Risk AI: Systems used in critical areas (like healthcare, law enforcement, or employment) face strict requirements for transparency, data quality, and human oversight.

- Transparency: AI systems that interact with people (like chatbots) must disclose that they are AI, not human.

- Fines: Companies violating the rules can face fines of up to €35 million or 7% of global annual turnover, whichever is higher.

The AI Act was officially adopted in 2024 and is being rolled out in stages, with most provisions expected to be fully enforced by 2026.

Different provisions take effect at different times. Here’s a concise breakdown of the key milestones and timelines:

AI Act Implementation Timeline

- Entry into Force (August 2024): The AI Act became official EU law.

- Banned AI Systems (February 2025): Prohibitions on unacceptable AI (e.g., social scoring, manipulative AI).

- General AI Rules (August 2025): Most obligations for AI providers and users start to apply.

- High-Risk AI (August 2026): Full compliance required for high-risk AI systems, e.g., healthcare, law enforcement.

- Enforcement (2026 onwards): National authorities begin active monitoring and enforcement.
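To make the staged rollout concrete, here is a minimal Python sketch that answers "which milestones already apply on a given date?". The milestone labels come from the timeline above; the day of the month (the 2nd) follows the application dates in the regulation, and the function name `provisions_in_effect` is a hypothetical helper, not part of any official tool.

```python
from datetime import date

# Milestones from the timeline above. The exact day (the 2nd of the month)
# follows the regulation's application dates; the labels are this article's.
MILESTONES = [
    (date(2025, 2, 2), "Banned AI systems"),
    (date(2025, 8, 2), "General AI rules"),
    (date(2026, 8, 2), "High-risk AI"),
]


def provisions_in_effect(on: date) -> list[str]:
    """Return the labels of all milestones that start on or before `on`."""
    return [label for start, label in MILESTONES if start <= on]


if __name__ == "__main__":
    # Example: check which stages apply at the end of 2025.
    print(provisions_in_effect(date(2025, 12, 31)))
```

A lookup like this is only a planning aid; the legal start dates of individual articles should always be checked against the regulation itself.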

Banned AI practices

Under the EU AI Act, certain AI practices are completely banned because they are considered to pose unacceptable risks to people’s safety, rights, and democratic values. These prohibitions came into effect in February 2025. Here’s what’s banned:

- Social Scoring by Governments: AI systems that evaluate or classify people based on behavior, socio-economic status, or personal characteristics, leading to detrimental or unfavorable treatment.

- Manipulative AI: AI that exploits vulnerabilities (due to age, disability, or social/economic situation) to distort behavior, causing physical or psychological harm.

- Biometric Categorization: Using AI to categorize people based on sensitive characteristics (e.g., race, political opinions, trade union membership, religious beliefs, sexual orientation) in public spaces.

- Predictive Policing (with risks): AI systems that predict criminal behavior based solely on profiling or location, if they risk discriminatory outcomes.

- Emotion Recognition in Work/School: Using AI to infer emotions in workplaces or educational institutions, except for medical or safety reasons.

- Untargeted Scraping of Faces: Collecting facial images from the internet or CCTV to create facial recognition databases without consent.

Why Are These Banned?

The EU considers these practices to be fundamentally harmful to human dignity, privacy, and equality. The bans are designed to prevent mass surveillance, discrimination, and manipulation.

General AI Rules

The General AI Rules under the EU AI Act, which began applying in August 2025, set out broad obligations for most AI systems not classified as high-risk or banned. These rules aim to ensure transparency, accountability, and safety across the AI ecosystem. Here’s what you need to know:

Transparency Requirements

AI Disclosure: Users must be clearly informed when they are interacting with an AI system (e.g., chatbots, deepfakes, or AI-generated content).

Watermarking: AI-generated content (text, images, audio, video) must be labeled as such, especially if it could be mistaken for human-created content.

Risk Management & Documentation

Technical Documentation: AI providers must keep records of their system’s design, training data, and intended use.

User Information: Providers must supply clear instructions for use, including the system’s capabilities and limitations.

Data Governance

Data Quality: AI systems must be trained on high-quality, representative datasets to minimize bias and errors.

Copyright Compliance: AI developers must respect EU copyright laws when using data for training.

Human-in-the-Loop: For AI systems that make significant decisions (e.g., hiring, lending), there must be mechanisms for human review or intervention.

Compliance for General-Purpose AI Foundation Models: Developers of large, general-purpose AI models (like those powering chatbots) must assess and mitigate risks, and report serious incidents to authorities.

Who Do These Rules Apply To?

AI Providers (companies or individuals placing AI systems on the EU market)

AI Users (organizations deploying AI in the EU)

Importers & Distributors of AI systems

Penalties for Non-Compliance: Fines up to €15 million or 3% of global annual turnover (whichever is higher) for violations of general obligations.

How the EU AI Act Affects U.S. Companies

For U.S. companies operating in the EU or offering AI products/services to EU customers, the EU AI Act has significant implications. Here’s what U.S. companies need to know:

Scope: Who is Impacted?

Any U.S. company that places AI systems on the EU market or whose AI outputs are used in the EU—regardless of where the company is based.

Examples: U.S. tech giants, AI startups, cloud providers, and any business using AI in customer-facing or internal EU operations.

Compliance with Risk Categories

Banned AI: Must not develop or deploy banned AI practices (e.g., social scoring, manipulative AI).

High-Risk AI: If your AI is used in critical sectors (healthcare, law enforcement, employment, etc.), you must meet strict requirements for risk assessment, data quality, and human oversight.

General AI: Must follow transparency rules (e.g., labeling AI-generated content, disclosing AI interactions).

Documentation & Reporting: Maintain technical documentation, log datasets, and report serious incidents to EU authorities.

Representation in the EU: Appoint an authorized representative in the EU to act as a liaison with regulators.

Enforcement & Penalties

Fines: Up to €35 million or 7% of global annual turnover (whichever is higher) for non-compliance with banned AI; up to €15 million or 3% for other violations.

Market Access: Non-compliant AI systems can be banned from the EU market.

Practical Steps for U.S. Companies

Audit AI Systems: Classify your AI products according to the EU’s risk categories.

Update Data Practices: Ensure datasets are unbiased, representative, and legally sourced.

Implement Transparency: Add clear disclosures for AI interactions and generated content.

Monitor Regulatory Updates: The EU is still finalizing some guidelines, especially for general-purpose AI.

Why Comply?

The EU is a major market—non-compliance could mean losing access to hundreds of millions of customers.

The AI Act is becoming a global standard, with other countries (e.g., Canada, Brazil) considering similar laws.

Stay tuned, some aspects are still being finalized...

The EU AI Act is a groundbreaking and highly complex regulation, which is why some guidelines and implementation details are still being finalized—even after the law’s entry into force. Here’s why:

Technical Complexity

AI is rapidly evolving: The EU wants to ensure guidelines keep pace with new technologies (like generative AI, foundation models, and edge AI) that didn’t exist or weren’t widespread when the Act was drafted.

Risk classification: Defining what counts as “high-risk” AI in practice requires detailed technical standards, which are being developed with input from experts and industry.

Need for Clarity & Consistency

Avoiding ambiguity: The EU wants to prevent legal loopholes or inconsistent enforcement across member states. This means creating clear, actionable guidelines for businesses and regulators.

Harmonized standards: The EU is working with standardization bodies (like CEN-CENELEC) to create technical standards that align with the Act’s requirements.

Stakeholder Feedback

Public consultation: The EU is gathering input from businesses, civil society, and technical experts to ensure the rules are practical and effective.

Pilot programs: Some guidelines are being tested in real-world scenarios before finalization.

Phased Rollout: The AI Act is being implemented in stages (see the implementation timeline above). Some rules (like those for general-purpose AI) are still being refined to ensure they are enforceable and proportionate.

Global Coordination: The EU is also considering how its rules interact with other jurisdictions (like the U.S. AI Executive Order or UK AI regulations), which can influence the final shape of the guidelines.

What’s Still Being Finalized?

General-purpose AI (GPAI) rules: Guidelines for foundation models (like those powering chatbots) are expected in 2026.

High-risk AI assessment: Detailed methodologies for risk assessment and compliance.

Enforcement mechanisms: How national authorities will monitor and penalize non-compliance.

In short, the EU is taking a cautious, iterative approach to ensure the AI Act works in practice—not just on paper.

Why Me?!

In 2023, the European Union was drafting its landmark AI Act, a sweeping set of regulations to govern artificial intelligence. As lawmakers debated how to classify different AI systems, someone realized a hilarious oversight: the EU’s own AI-powered chatbot, designed to help citizens understand the new AI rules, was itself not fully compliant with the draft law.

The chatbot, meant to explain the AI Act, was built using technology that might fall under the “high-risk” category—meaning it could be subject to strict transparency and accountability requirements. But the chatbot’s own documentation was vague, and it wasn’t clear if it met all the proposed standards. So, in a classic bureaucratic twist, the EU’s AI chatbot might have needed to be retooled or even taken offline to comply with the very law it was explaining!

The irony wasn’t lost on tech commentators, who joked that the EU had created a self-regulating AI paradox. The story became a favorite anecdote among AI researchers and policymakers, highlighting the challenges of regulating fast-moving technology.

Structure of the AI Act

The AI Act has a broad scope and impacts stakeholders in the EU as well as non-EU entities that operate in the EU. It applies not only to providers of AI systems but also to operators, distributors, importers, and deployers of these systems. This wide-reaching application means that companies across different industries, including those not primarily focused on technology, must adapt to the AI Act's requirements. The AI Act specifies compliance requirements for each category of stakeholder.

Risk Classification Framework

The AI Act introduces a risk-based classification system that categorizes AI systems into four distinct risk levels. This classification dictates the regulatory obligations associated with each category.

Unacceptable Risk

AI systems that pose a significant threat to fundamental human rights and safety are prohibited. Some examples are social scoring systems, real-time biometric identification for surveillance in public spaces, and manipulative AI practices.

High Risk

High-risk systems are classified based on their intended use and the existing regulatory framework. They are subject to stringent requirements aimed at ensuring safe and ethical deployment, including risk analysis, impact assessments, transparency, documentation, human oversight, and monitoring mechanisms. The AI Act mandates that users of high-risk systems provide detailed documentation and conduct data protection impact assessments (DPIAs) to mitigate potential risks associated with processing personal data. Furthermore, the establishment of regulatory sandboxes allows for real-world testing, enabling small and medium-sized enterprises (SMEs) to innovate while adhering to these strict guidelines.

- High-risk AI systems are identified based on their potential to significantly impact health, safety, or fundamental human rights and include applications in critical infrastructure, healthcare, employment, and law enforcement. These systems must adhere to specific guidelines to ensure compliance before these systems are placed on the market or put into service within the EU.

- High-risk AI systems must disclose their purpose, functionality, and decision-making processes.

- Comprehensive technical documentation must be prepared before a high-risk AI system can be deployed. To ensure compliance with the requirements set out in the AI Act, the documentation should detail the system's design, operational controls, performance metrics, and risk management strategies.

- Providers of high-risk AI systems are required to maintain a documented quality management system and retain comprehensive documentation for ten years after the system's introduction to the EU market. This includes technical documentation and evidence of compliance with the AI Act's provisions. The documentation must be readily available to competent authorities upon request.

- High-risk AI systems are subject to ongoing evaluation throughout their life cycle. This evaluation includes assessing their performance to ensure that they remain compliant with safety and transparency requirements. Systems that continue to learn post-deployment must be designed to minimize biases in their outputs and ensure that feedback loops are appropriately managed.

- Providers of high-risk AI systems must also register their systems in an EU database prior to market entry and appoint an authorized representative within the EU if established outside of it. This representative is responsible for ensuring compliance with the AI Act and maintaining communication with EU authorities.
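The provider obligations listed above lend themselves to a simple checklist. The sketch below is one hypothetical way to track them in Python: the class name `HighRiskSystemRecord`, its fields, and the wording of the checklist items are illustrative assumptions, not terms defined by the AI Act. It reports which items are still missing and computes the ten-year documentation retention date.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class HighRiskSystemRecord:
    """Hypothetical record of a provider's pre-market obligations."""
    name: str
    placed_on_market: date
    technical_documentation: bool = False
    quality_management_system: bool = False
    registered_in_eu_database: bool = False
    provider_established_in_eu: bool = True
    authorized_representative: bool = False

    def missing_items(self) -> list[str]:
        """List the obligations from the text that are not yet satisfied."""
        missing = []
        if not self.technical_documentation:
            missing.append("technical documentation")
        if not self.quality_management_system:
            missing.append("quality management system")
        if not self.registered_in_eu_database:
            missing.append("EU database registration")
        # A representative is only needed for providers outside the EU.
        if not self.provider_established_in_eu and not self.authorized_representative:
            missing.append("authorized representative in the EU")
        return missing

    def retention_deadline(self) -> date:
        """Date until which documentation must be kept (ten years)."""
        target_year = self.placed_on_market.year + 10
        try:
            return self.placed_on_market.replace(year=target_year)
        except ValueError:
            # 29 February with no leap day ten years later: use 28 February.
            return self.placed_on_market.replace(year=target_year, day=28)


if __name__ == "__main__":
    record = HighRiskSystemRecord(
        name="cv-screener",
        placed_on_market=date(2026, 9, 1),
        technical_documentation=True,
        provider_established_in_eu=False,
    )
    print(record.missing_items())
    print(record.retention_deadline())
```

A real compliance tracker would need far more detail (evidence links, responsible owners, conformity assessment status), but the structure shows how the listed obligations can be checked mechanically before market entry.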

Here are some examples of high-risk sectors:

- Infrastructure: AI systems used as safety components in the management and operation of essential public infrastructure e.g. water, gas and electricity supplies.

- Education: AI systems used to determine access to educational institutions or to assess students, e.g. AI systems used to grade exams.

- Human Resources: AI systems used in recruitment and employment e.g. for placing job advertisements, scoring candidates or reviewing job applications, promotion or termination decisions or in reviewing work.

- Security: AI systems used in migration, asylum and border control management or in various other law enforcement and judicial contexts.

- Politics: AI systems used for influencing the outcome of democratic processes or the voting behaviour of voters.

- Finance: AI systems used in the insurance and banking sectors.

Limited Risk

Limited risk AI systems, such as chatbots, face minimal obligations, primarily focused on transparency.

Minimal Risk

Minimal risk systems have negligible regulatory requirements, since they are largely governed by other applicable EU and national laws.
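The four-tier framework can be pictured as a lookup from risk tier to obligations. The following Python sketch is illustrative only: the obligation summaries paraphrase this article rather than the legal text, and the `EXAMPLE_USE_CASES` mapping and `classify` helper are hypothetical names built from the examples given above. Real classification depends on the intended use and on the regulation's annexes, not on a keyword table.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Obligations paraphrased from this article, not quoted from the Act.
OBLIGATIONS = {
    RiskTier.UNACCEPTABLE: ["prohibited"],
    RiskTier.HIGH: [
        "risk analysis",
        "transparency",
        "technical documentation",
        "human oversight",
        "EU database registration",
    ],
    RiskTier.LIMITED: ["transparency"],
    RiskTier.MINIMAL: [],
}

# Hypothetical examples drawn from the use cases mentioned in the text.
EXAMPLE_USE_CASES = {
    "social scoring": RiskTier.UNACCEPTABLE,
    "exam grading": RiskTier.HIGH,
    "candidate scoring": RiskTier.HIGH,
    "chatbot": RiskTier.LIMITED,
}


def classify(use_case: str) -> RiskTier:
    """Look up a known example; unknown use cases raise KeyError so that
    nothing is silently treated as minimal risk."""
    return EXAMPLE_USE_CASES[use_case]


if __name__ == "__main__":
    tier = classify("exam grading")
    print(tier.value, OBLIGATIONS[tier])
```

Raising an error for unknown use cases is a deliberate choice in this sketch: an unclassified system should trigger a review rather than default to the lightest tier.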

Transparency Obligations

The AI Act places a heavy emphasis on transparency, as set out in its provisions on transparency and the provision of information to deployers (Article 13). This requirement aims to foster trust and accountability in AI governance, enabling users to make informed decisions regarding these technologies. Providers must give deployers transparent information about the system's characteristics, capabilities, limitations, and instructions for use. The information must be clear and accessible to ensure proper interpretation and application of the system's output.

Data Governance

The training, validation, and testing data used must be managed appropriately, taking into account biases that could affect individuals' health and safety or violate human rights. Providers must implement measures to detect, prevent, and mitigate such biases.
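As a rough illustration of what "detecting" bias can mean in practice, the sketch below compares positive-outcome rates across groups in a labeled dataset. This is one common, simple check (a selection-rate gap); the AI Act does not prescribe this or any specific metric, and the function names and toy data are assumptions made for the example.

```python
from collections import defaultdict


def selection_rates(records):
    """Compute the share of positive outcomes per group.

    `records` is an iterable of (group, outcome) pairs, with outcome 0 or 1.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        positives[group] += outcome
    return {group: positives[group] / totals[group] for group in totals}


def max_rate_gap(rates):
    """Largest difference in selection rate between any two groups."""
    return max(rates.values()) - min(rates.values())


if __name__ == "__main__":
    # Toy data: group "a" is selected twice as often as group "b".
    data = [("a", 1), ("a", 1), ("a", 0), ("a", 0),
            ("b", 1), ("b", 0), ("b", 0), ("b", 0)]
    rates = selection_rates(data)
    print(rates, max_rate_gap(rates))
```

A large gap does not prove unlawful bias, and a small one does not rule it out; a check like this is a starting point for the detection and mitigation measures the text describes.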

Human Oversight

Human oversight is a critical component of governance, especially for high-risk AI systems. These systems must be designed to allow effective human supervision, which is necessary to minimize risks associated with their operation. This includes ensuring that operators can understand the system's capacities, monitor its functioning, and intervene when necessary; providing built-in measures that let human operators monitor and interrupt the system's operation; and defining clear protocols for overriding the system's output when it is deemed inappropriate or harmful.
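The three oversight capabilities described above (monitoring, interruption, and override) can be sketched as a thin wrapper around a model. The Python below is a hypothetical design, not a pattern mandated by the AI Act: `OverseenSystem`, `halt`, and `decide` are illustrative names, and the model is any callable that maps a case to a proposed decision.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class OverseenSystem:
    """Hypothetical wrapper that keeps a human operator in control."""
    model: Callable[[str], str]
    halted: bool = False
    log: list = field(default_factory=list)

    def halt(self) -> None:
        """Interrupt: the operator stops the system from issuing decisions."""
        self.halted = True

    def decide(self, case: str, override: Optional[str] = None) -> str:
        """Return the model's decision unless the operator overrides it."""
        if self.halted:
            raise RuntimeError("system halted by human operator")
        proposed = self.model(case)
        final = override if override is not None else proposed
        # Monitoring: every decision is logged with its override status.
        self.log.append({
            "case": case,
            "proposed": proposed,
            "final": final,
            "overridden": override is not None,
        })
        return final


if __name__ == "__main__":
    system = OverseenSystem(model=lambda case: "reject")
    print(system.decide("application-1"))
    print(system.decide("application-2", override="accept"))
    system.halt()
```

Keeping the model's proposal in the log even when it is overridden gives reviewers a record of how often, and where, human judgment diverged from the system.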

Ethical Considerations

The AI Act emphasizes the importance of ethical AI, asserting that AI development should align with societal values and foster trust among users. This involves addressing biases that may arise from unrepresentative datasets and ensuring that AI systems do not perpetuate harm to underrepresented groups. By requiring developers to conduct impact assessments that focus on marginalized populations, the EU aims to promote fairness and equality in AI technologies. Education and collaboration among policymakers, developers, and affected communities are also crucial for advancing these goals.

Global Influence

As the EU AI Act sets a precedent for global AI regulation, it is anticipated that it will influence other jurisdictions in their regulatory approaches. The rising number of countries adopting AI-related laws points to a trend toward multilateral coordination on AI governance. The Act's emphasis on transparency, accountability, and user rights seeks to establish a standard for ethical AI practices, potentially reshaping the landscape of global AI development and deployment.

Compliance and Enforcement

In accordance with the provisions outlined in the AI Act, Member States are required to establish rules regarding penalties and enforcement measures applicable to infringements. These measures may include warnings and non-monetary actions, and must be implemented effectively, aligning with the guidelines issued by the Commission under Article 96.

The penalties enforced are required to be effective, proportionate, and dissuasive. The guidelines also take into consideration the interests of small and medium-sized enterprises and start-ups to ensure their economic viability. Member States must keep the Commission informed about the established rules on penalties as well as any subsequent amendments.

Administrative Fines

The AI Act stipulates significant administrative fines for non-compliance. Violations of the prohibited AI practices listed in Article 5 may incur fines of up to 35 million euros or 7% of the offender's total worldwide annual turnover for the preceding financial year, whichever is higher. Non-compliance with other provisions applying to operators or notified bodies, not covered by Article 5, may result in fines of up to 15 million euros or 3% of total worldwide annual turnover, whichever is higher. Supplying incorrect or misleading information to notified bodies or national authorities can draw fines of up to 7.5 million euros or 1% of total worldwide annual turnover, whichever is higher. For SMEs, including start-ups, each fine is capped at the lower of the two figures rather than the higher.
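The "whichever is higher" rule is easy to misread, so here is a small Python sketch of the arithmetic. The amounts and percentages are the ones quoted above; the tier keys and the `max_fine` function are hypothetical names. The sketch assumes that for SMEs the lower of the two figures applies, which is how the Act's SME provision reads, and it returns only the statutory ceiling, since actual fines are set case by case.

```python
# (fixed ceiling in euros, percentage of worldwide annual turnover)
FINE_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "other_obligation": (15_000_000, 3),
    "misleading_information": (7_500_000, 1),
}


def max_fine(violation: str, annual_turnover_eur: float, is_sme: bool = False) -> float:
    """Return the maximum possible fine in euros for a violation tier.

    Large companies face the higher of the fixed amount and the
    turnover-based amount; SMEs are assumed to face the lower one.
    """
    fixed, percent = FINE_TIERS[violation]
    turnover_based = annual_turnover_eur * percent / 100
    if is_sme:
        return min(fixed, turnover_based)
    return max(fixed, turnover_based)


if __name__ == "__main__":
    # A company with 1 billion euros turnover using a prohibited practice:
    # 7% of turnover (70 million) exceeds the 35 million fixed ceiling.
    print(max_fine("prohibited_practice", 1_000_000_000))
    # An SME with 10 million euros turnover: 7% (700,000) is the lower figure.
    print(max_fine("prohibited_practice", 10_000_000, is_sme=True))
```

For most large companies the percentage figure dominates, which is why exposure scales with global turnover rather than with EU revenue alone.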

Compliance Procedures

Market surveillance authorities play a crucial role in evaluating compliance with the AI Act. If an authority believes that an AI system classified as non-high-risk by a provider may actually be high-risk, it can re-assess the classification and enforce compliance with the Regulation. Should the authority determine that the AI system is indeed high-risk, it must require the provider to promptly take necessary corrective actions.

Comparisons with Other Regulations

The EU AI Act interacts significantly with other regulatory frameworks, particularly the General Data Protection Regulation (GDPR). Both regulations possess an extraterritorial scope, which extends their application beyond the borders of the EU to include any entity that processes personal data or develops AI systems affecting individuals within the EU, regardless of the company's location. This ensures that the rights of individuals are protected, even against non-EU businesses.

Both the GDPR and the AI Act employ a risk-based approach to regulation. Under GDPR, data controllers and processors must evaluate risks to individuals' privacy. The AI Act extends this concept by mandating a systematic risk classification of AI systems, categorizing them as unacceptable, high, limited, or minimal risk, each carrying specific compliance obligations. This structured approach emphasizes the importance of understanding and managing risks associated with both personal data processing and AI technologies.

The enforcement mechanisms in both regulations show how seriously the EU takes compliance. For instance, the AI Act stipulates that non-compliance with certain provisions can lead to hefty administrative fines. These penalties mirror those of the GDPR, which also imposes significant fines for violations, ensuring that organizations prioritize adherence to both frameworks.

Challenges of the AI Act

The AI Act has generated spirited debate, particularly regarding its implications for innovation and global competitiveness. Critics argue that stringent regulations could stifle technological advancement, while proponents assert that ethical governance is essential for building public trust in AI technologies. Also, the AI Act's extraterritorial application means that non-EU entities must also comply if they operate within the EU market. This aspect raises questions about the global impact of the AI Act and its potential to influence AI legislation in other jurisdictions.

As the AI regulatory landscape evolves, European technology companies are likely to exert pressure for more flexible regulations that broaden the definition of risk. This dialogue may extend beyond the EU, with other countries observing the EU's approach to inform their own regulatory strategies. There will likely be a period of adjustment during which the pace of innovation could slow down, resulting in what has been termed an "innovation winter", as companies navigate the complexities of compliance with the new standards and the tooling required for AI data and models.

Despite their complementary roles, there are notable gaps and overlaps between the EU AI Act and GDPR, which can create challenges for organizations aiming to comply with both regulations. The legal terminology and assessment methods differ, requiring further guidance to streamline compliance efforts. Cooperation between the authorities overseeing these regulations is suggested as a means to mitigate inconsistencies in enforcement and interpretation.

The AI Act explicitly references GDPR principles, emphasizing that they must be integrated into AI systems, particularly in relation to the processing of personal data for training purposes. This intersection raises important questions about the respective roles of data protection authorities and those governing AI compliance.

Future Developments

Initially proposed by the European Commission in April 2021, the AI Act underwent significant revisions after generative AI technologies such as ChatGPT emerged in late 2022, which prompted adjustments to the draft text to incorporate specific rules for generative AI. The European Parliament's Committee on the Internal Market and Consumer Protection adopted the revised text before the Act's formal adoption in 2024.

The AI Act is designed to complement the General Data Protection Regulation (GDPR) rather than replace it, establishing conditions for the development and deployment of trustworthy AI systems. This alignment is essential for ensuring that the AI Act enhances the regulatory framework surrounding data protection while addressing the unique challenges posed by AI technologies.

Organizations are encouraged to stay tuned as the regulatory environment continues to shift. With an ongoing review process in place, the European Commission is expected to refine its approach in response to market and technological developments in an attempt to balance innovation with regulatory needs. This adaptability will be crucial for companies aiming to succeed under the new regulatory framework.

Links

Related: Governance | AI Ethics | Bias | Data Privacy

External links:

- artificialintelligenceact.eu/high-level-summary

- artificialintelligenceact.eu

- europarl.europa.eu/thinktank/en/document

- deloitte.com/nl/en/services/risk-advisory/analysis/eu-ai-act

- kpmg.com/xx/en/our-insights/eu-tax/decoding-the-eu-artificial-intelligence-act

- ey.com/en_ch/insights/forensic-integrity-services/the-eu-ai-act-what-it-means-for-your-business

- simmons-simmons.com/en/publications/clyimpowh000ouxgkw1oidakk/the-eu-ai-act-a-quick-guide

- digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai