Bias in AI

A condition where AI systems produce unfair or prejudiced results

Bias in AI can be defined as the presence of errors in data or algorithms that can lead to unfair outcomes, often reflecting societal biases present in the training data.

AI can be biased when the data it learns from does not represent the population it is applied to, so its recommendations do not carry over to the groups that were left out. It can also be biased when the data collection process itself is biased, which leads the AI to biased conclusions. Some people hesitate to use AI tools because they can produce biased information.

Bias arises in several ways. The training data may be flawed: AI learns from data that can contain human biases or be incomplete. Developers may build their own biases into AI systems during the machine learning process. The data used to train the AI may not reflect the population the system is meant to serve, leading to skewed results. Finally, AI can perpetuate existing societal biases present in historical data.
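One way to check whether training data reflects the intended population is to compare each group's share of the dataset with its share of a reference population. The following is a minimal sketch under stated assumptions: the group labels, the ten sample records, and the 50/50 reference shares are all hypothetical, and `representation_gaps` is an illustrative helper, not part of any library.

```python
from collections import Counter


def representation_gaps(samples, reference_shares):
    """Return (dataset share - reference share) for each reference group.

    A positive value means the group is over-represented in the data,
    a negative value means it is under-represented.
    """
    total = len(samples)
    if total == 0:
        raise ValueError("samples must not be empty")
    counts = Counter(samples)
    return {
        group: counts.get(group, 0) / total - share
        for group, share in reference_shares.items()
    }


# Hypothetical group labels for ten training records.
training_groups = ["A"] * 8 + ["B"] * 2
# Hypothetical shares of each group in the population being served.
population_shares = {"A": 0.5, "B": 0.5}

gaps = representation_gaps(training_groups, population_shares)
for group, gap in gaps.items():
    print(f"{group}: {gap:+.2f}")
```

In this toy data, group B makes up 20 percent of the records against an assumed 50 percent of the population, so the check flags it as under-represented by 30 percentage points.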

Examples of AI bias include facial recognition systems performing poorly for certain ethnicities, job recruitment tools favoring a certain race, and healthcare algorithms disadvantaging a particular class of patients.

Bias in AI can lead to discrimination, reinforce societal inequalities, and produce inaccurate results. It's an ongoing problem that requires diverse data sets, careful algorithm design, and continuous monitoring.

Real-World Examples

There are sadly many examples of bias in AI. Here are a few:

In 2019, researchers found that an algorithm used in US hospitals to predict which patients would need additional medical care favored white patients over black patients. The algorithm used patients' past healthcare expenditures as a stand-in for their healthcare needs. Because less money had historically been spent on black patients with the same level of need, it underestimated how sick they were.
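The effect of ranking patients by past spending rather than by need can be illustrated with a toy example. This is a sketch on synthetic numbers, not the hospital algorithm or its data: the eight patients, the need scores, and the assumption that group B incurs 70 percent of group A's cost at the same level of need are all made up for illustration.

```python
from collections import Counter

# Synthetic patients: (group, health_need, past_cost).
# Assumption: at equal need, group B's past cost is 70% of group A's.
patients = [
    ("A", 9, 9000), ("A", 7, 7000), ("A", 5, 5000), ("A", 3, 3000),
    ("B", 9, 6300), ("B", 7, 4900), ("B", 5, 3500), ("B", 3, 2100),
]


def select_top(rows, key_index, k):
    """Pick the k rows with the highest value in column key_index."""
    return sorted(rows, key=lambda row: row[key_index], reverse=True)[:k]


def group_counts(rows):
    """Count how many selected rows belong to each group."""
    return Counter(row[0] for row in rows)


# Enroll the top four patients, first ranked by cost, then by need.
by_cost = group_counts(select_top(patients, key_index=2, k=4))
by_need = group_counts(select_top(patients, key_index=1, k=4))

print("ranked by past cost:", dict(by_cost))
print("ranked by health need:", dict(by_need))
```

Ranking by need selects two patients from each group, while ranking by cost selects three from group A and one from group B, even though both groups are equally sick in this synthetic data.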

According to a 2015 study, only 11 percent of the individuals who appeared in a Google Images search for the term "CEO" were women. Another study revealed that Google's online advertising system displayed high-paying positions to men much more often than to women.

Research shows that some self-driving vehicles are worse at detecting pedestrians with dark skin, putting their lives at risk.

Amazon's experimental recruiting tool used AI to assign job applicants ratings ranging from one to five stars, similar to how customers rate goods on Amazon. The business discovered its new system was not evaluating applicants for technical positions in a gender-neutral manner because it was biased against women.

Janet Hill, wife of Apple co-founder Steve Wozniak, was given a credit limit amounting to only 10 percent of her husband's, even though judging creditworthiness on gender is inappropriate and potentially illegal.

Mortgage approval algorithms have been found to be 40-80% more likely to deny borrowers of color because historical lending data disproportionately shows minorities being denied loans.

Intel's classroom software has a feature that monitors students' faces to detect emotions while they learn. Critics said that different cultural norms for expressing emotion create a high probability of students' emotions being mislabeled.

The COMPAS algorithm is used in US court systems to predict the likelihood that a defendant will reoffend. Due to the data that was used, the model that was chosen, and the overall process of creating the algorithm, the model produced false positives for recidivism roughly twice as often for black offenders (45%) as for white offenders (23%).
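The false positive rate behind a comparison like this is the share of people who did not reoffend but were still flagged as high risk, computed separately for each group. Here is a minimal sketch of that calculation. The records, group names, and field names are hypothetical and are not drawn from the COMPAS data.

```python
def false_positive_rate(records):
    """FPR = flagged non-reoffenders / all non-reoffenders."""
    negatives = [r for r in records if not r["reoffended"]]
    if not negatives:
        return float("nan")  # undefined when nobody is an actual negative
    flagged = sum(1 for r in negatives if r["predicted_high_risk"])
    return flagged / len(negatives)


def fpr_by_group(records):
    """Compute the false positive rate separately for each group."""
    grouped = {}
    for r in records:
        grouped.setdefault(r["group"], []).append(r)
    return {group: false_positive_rate(rs) for group, rs in grouped.items()}


def make(group, predicted_high_risk, reoffended, n):
    """Build n identical synthetic records (illustrative helper)."""
    return [
        {"group": group, "predicted_high_risk": predicted_high_risk,
         "reoffended": reoffended}
        for _ in range(n)
    ]


# Synthetic records, invented for this example.
records = (
    make("X", True, False, 2) + make("X", False, False, 2) + make("X", True, True, 1)
    + make("Y", True, False, 1) + make("Y", False, False, 3) + make("Y", True, True, 1)
)

rates = fpr_by_group(records)
print(rates)
```

In this synthetic data, group X has a false positive rate of 0.5 and group Y a rate of 0.25, the same kind of two-to-one gap described above, even though the model flags every actual reoffender in both groups.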

"Biases have a tendency to stay embedded because recognizing them, and taking steps to address them, requires a deep mastery of data-science techniques, as well as a more meta-understanding of existing social forces, including data collection. In all, debiasing is proving to be among the most daunting obstacles, and certainly the most socially fraught, to date."

Fixing It

In addition to being biased, AI can spread misinformation, and generative AI tools can produce incorrect information. Here are a number of ways to reduce bias in AI:

- Human oversight: People can monitor outputs, analyze data, and make corrections when bias is displayed. For example, marketers can pay special attention to generative AI outputs before using them in marketing materials to ensure they are fair.

- Assess the potential for bias: Some use cases for AI have a higher potential for being prejudiced and harmful to specific communities. In these cases, people can take the time to assess how likely their AI is to produce biased results, as when banking institutions rely on historically prejudiced data.

- Investing in AI ethics: One of the most important ways to reduce AI bias is for there to be continued investment into AI research and AI ethics, so people can devise concrete strategies to reduce it.

- Diversifying AI: Having diverse perspectives in AI helps create unbiased practices as people bring their own lived experiences. A diverse and representative field brings more opportunities for people to recognize the potential for bias and deal with it before harm is caused.

- Acknowledge human bias: All humans have the potential for bias, whether from a difference in lived experience or confirmation bias during research. People using AI can acknowledge their biases to ensure their AI isn't biased, like researchers making sure their sample sizes are representative.

- Being transparent: Transparency is always important, especially with new technologies. People can build trust and understanding with AI by simply making it known when they use AI, like adding a note below an AI-generated news article.

- Examine the context: Some industries and use cases are more prone to AI bias and have a previous record of relying on biased systems. Being aware of where AI has struggled in the past can help companies improve fairness, building on industry experience.

- Control your data: Establish a data governance framework and document company-wide data management practices that can help reduce bias. Utilize data consultants to help you with the initiative, and pay attention to the data you acquire through a third party.

- Train AI models on complete and representative data: Establish procedures and guidelines on how to collect, sample, and preprocess training data. Involve internal or external teams to spot discriminatory correlations and potential sources of AI bias in the training datasets.

- Perform targeted testing: While testing your models, examine AI's performance across different subgroups to uncover problems that can be masked by aggregate metrics. Perform a set of stress tests to check how the model performs on complex cases. Continuously retest the models as you gain more real-life data and get feedback from users.

- Hone human decisions: AI can help reveal inaccuracies present in human decision-making. If AI models trained on recent human decisions or behavior show bias, be ready to improve human-driven processes in the future.

- Improve AI explainability: Understand how AI generates predictions and which features of the data it uses to make decisions. Pinpointing the factors that contribute to AI bias can help in identifying and mitigating prejudice.
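The targeted-testing step in the list above can be sketched as a comparison between aggregate accuracy and accuracy per subgroup. This is a minimal illustration that assumes binary labels and made-up evaluation rows. The subgroup names and the helper functions are hypothetical.

```python
def accuracy(pairs):
    """Fraction of (true_label, predicted_label) pairs that match."""
    if not pairs:
        raise ValueError("pairs must not be empty")
    return sum(1 for y_true, y_pred in pairs if y_true == y_pred) / len(pairs)


def accuracy_by_subgroup(rows):
    """Compute accuracy separately for each subgroup.

    Each row is (subgroup, true_label, predicted_label).
    """
    by_group = {}
    for group, y_true, y_pred in rows:
        by_group.setdefault(group, []).append((y_true, y_pred))
    return {group: accuracy(pairs) for group, pairs in by_group.items()}


# Synthetic evaluation rows: 16 majority-group cases, 4 minority-group cases.
rows = (
    [("majority", 1, 1)] * 16   # all predicted correctly
    + [("minority", 1, 1)] * 2  # predicted correctly
    + [("minority", 1, 0)] * 2  # predicted incorrectly
)

overall = accuracy([(y_true, y_pred) for _, y_true, y_pred in rows])
per_group = accuracy_by_subgroup(rows)

print(f"overall: {overall:.2f}")
for group, value in per_group.items():
    print(f"{group}: {value:.2f}")
```

The aggregate accuracy of 0.90 looks healthy, but the breakdown shows the model is right only half the time for the smaller subgroup, which is the kind of problem an aggregate metric can mask.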

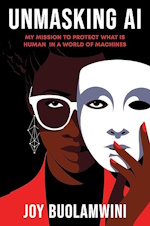

Unmasking AI: My Mission to Protect What Is Human in a World of Machines

Unmasking AI goes beyond the headlines about existential risks produced by Big Tech. It is the remarkable story of how the author uncovered what she calls "the coded gaze", the evidence of encoded discrimination and exclusion in tech products, and how she galvanized the movement to prevent AI harms by founding the Algorithmic Justice League. Applying an intersectional lens to both the tech industry and the research sector, she shows how racism, sexism, colorism, and ableism can overlap and render broad swaths of humanity "excoded" and therefore vulnerable in a world rapidly adopting AI tools. Computers, she reminds us, are reflections of both the aspirations and the limitations of the people who create them.

Links

levity.ai/blog/ai-bias-how-to-avoid

blog.hubspot.com/marketing/ai-bias

itrexgroup.com/blog/ai-bias-definition-types-examples-debiasing-strategies/

datatron.com/real-life-examples-of-discriminating-artificial-intelligence/

pixelplex.io/blog/ai-bias-examples/

chapman.edu/ai/bias-in-ai.aspx

ibm.com/think/topics/shedding-light-on-ai-bias-with-real-world-examples