The Beginnings of AI

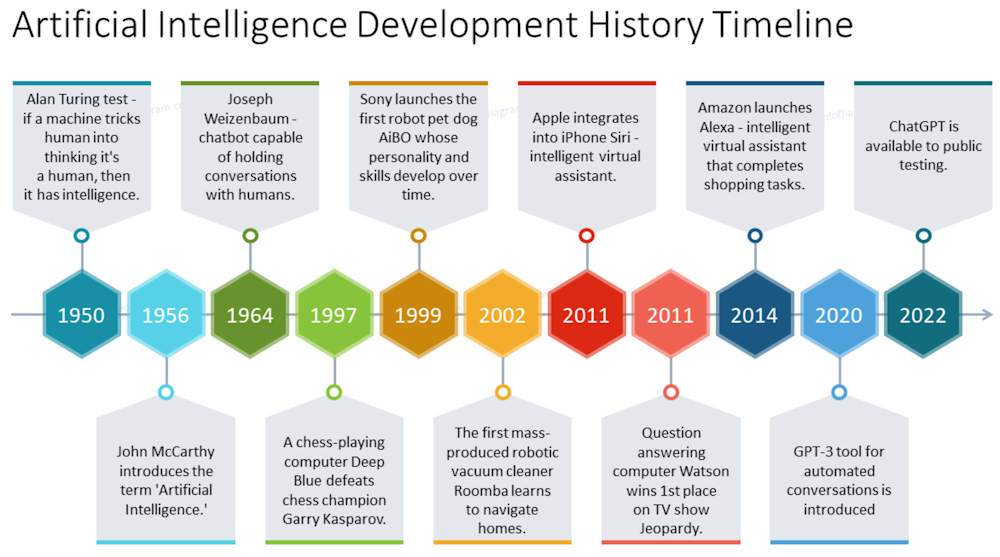

In 1950, Alan Turing, the English mathematician and computer science pioneer, posed a simple yet profound question: "Can machines think?" In his paper "Computing Machinery and Intelligence," Turing laid out what has become known as the Turing Test, or imitation game, as a way to determine whether a machine is capable of thinking.

The test was based on an adaptation of a Victorian-style game that involved the seclusion of a man and a woman from an interrogator, who must guess which is which. In Turing's version, the computer program replaced one of the participants, and the questioner had to determine which was the computer and which was the human. If the interrogator was unable to tell the difference between the machine and the human, the computer would be considered to be thinking, or to possess "artificial intelligence."

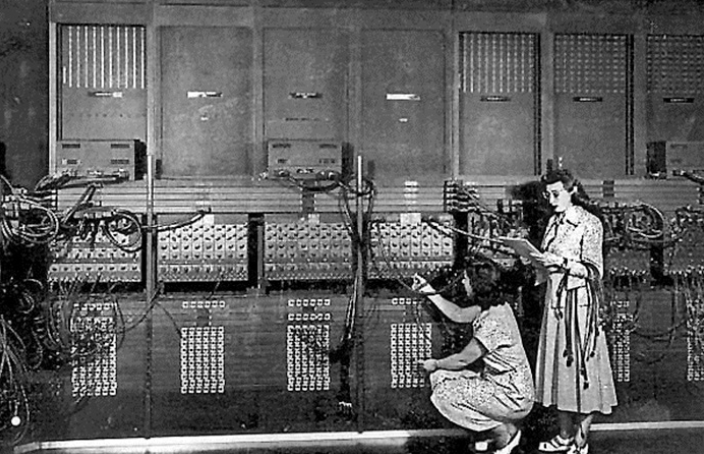

Turing's test came soon after the development of the first digital computers and before the term "artificial intelligence" had even been used

At the time, there were various names for the field of "thinking machines," including cybernetics and automata theory. In 1956, two years after the death of Turing, John McCarthy, a professor at Dartmouth College, organized a summer workshop to clarify and develop ideas about thinking machines. He chose the name "artificial intelligence" for the project. The Dartmouth conference, widely considered to be the founding moment of AI as a field of research, aimed to find "how to make machines use language, form abstractions and concepts, solve kinds of problems now reserved for humans and improve themselves."

AI Research Before 1950

Before 1950, the foundations of AI were laid through philosophical works that explored the concepts of computation, logic, and machine intelligence

Here are some key developments and research papers and the people that contributed to the beginnings of AI:

Alan Turing

"On Computable Numbers, with an Application to the Entscheidungsproblem" (1936):

In this seminal paper, Turing introduced the concept of a Turing machine, a theoretical construct that formalizes the notion of computation. This work laid the groundwork for modern computer science and influenced later discussions on machine intelligence.

"Computing Machinery and Intelligence" (1950): Turing posed the question, "Can machines think?" and introduced the famous Turing Test as a criterion for determining whether a machine exhibits intelligent behavior indistinguishable from that of a human. This paper is often regarded as one of the cornerstones of AI philosophy.

Norbert Wiener

Cybernetics (1948): Wiener's work on cybernetics explored control systems in animals and machines, emphasizing feedback loops and communication in complex systems. This concept influenced early AI research by framing machines as systems capable of learning and adapting.

Warren McCulloch and Walter Pitts

"A Logical Calculus of the Ideas Immanent in Nervous Activity" (1943): This paper introduced a model of artificial neurons and proposed that networks of these neurons could perform logical operations. Their work is considered foundational for neural network theory and inspired later developments in AI.
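The claim that simple threshold units can perform logical operations can be illustrated with a minimal sketch. The function names, weights, and thresholds below are assumptions chosen for illustration in modern notation; the 1943 paper used a different formalism, with absolute inhibition rather than negative weights.

```python
def mp_neuron(inputs, weights, threshold):
    """Fire (return 1) when the weighted input sum reaches the threshold."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return 1 if total >= threshold else 0


def AND(a, b):
    # Both inputs must be active to reach a threshold of 2.
    return mp_neuron([a, b], [1, 1], threshold=2)


def OR(a, b):
    # A single active input is enough to reach a threshold of 1.
    return mp_neuron([a, b], [1, 1], threshold=1)


def NOT(a):
    # Simplification: a negative weight stands in for an inhibitory input.
    return mp_neuron([a], [-1], threshold=0)


def XOR(a, b):
    # A small two-layer network: (a OR b) AND NOT (a AND b).
    return AND(OR(a, b), NOT(AND(a, b)))
```

Composing the units, as `XOR` does, is what makes networks of such neurons more expressive than any single unit.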

Claude Shannon

"A Mathematical Theory of Communication" (1948): Shannon's work on information theory provided essential insights into how information can be encoded, transmitted, and decoded. This theory is crucial for understanding data processing in AI systems.

Edmund Berkeley

"Giant Brains: Or Machines That Think" (1949): In this book, Berkeley speculated about the capabilities of machines to process information similarly to human brains. He argued that machines could perform logical reasoning and decision-making tasks.

John von Neumann

His contributions to game theory and automata theory during this period helped shape early thoughts on strategic decision-making processes in machines.

Vannevar Bush

"As We May Think" (1945): Although not strictly about AI, Bush's essay envisioned a future where machines could augment human thought processes through advanced information retrieval systems, which foreshadowed concepts used in AI development.

AI Research from 1950 to 1960

Between 1950 and 1960, several foundational research papers and developments shaped AI

The decade from 1950 to 1960 was important in establishing AI as a distinct field of study. Key figures like Alan Turing, John McCarthy, Herbert Simon, and others made contributions that shaped subsequent research directions in AI. The concepts developed during this period laid the groundwork for many modern AI applications and theories, influencing how machines are designed to think and learn.

Here's an overview of key contributions during this period:

Alan Turing

"Computing Machinery and Intelligence" (1950): In this influential paper, Turing introduced the concept of the Turing Test as a way to determine if a machine can exhibit intelligent behavior indistinguishable from that of a human. This work laid the groundwork for future discussions on machine intelligence.

Claude Shannon

"Programming a Computer for Playing Chess" (1950): This paper is one of the first to discuss the development of a computer program capable of playing chess, highlighting early efforts in creating intelligent systems that could perform complex tasks.

Marvin Minsky and Dean Edmonds

SNARC (Stochastic Neural Analog Reinforcement Calculator) (1951): Minsky and Edmonds built this early artificial neural network, which simulated a network of neurons using vacuum tubes. It was one of the first attempts to create a machine that could learn from its environment.

Arthur Samuel

Checkers-Playing Program (1952): Samuel developed one of the first self-learning programs, which played checkers. His work introduced concepts related to machine learning, demonstrating that computers could improve their performance through experience.

John McCarthy

Dartmouth Proposal (1955): McCarthy, along with Marvin Minsky, Nathaniel Rochester, and Claude Shannon, proposed a two-month study on artificial intelligence at Dartmouth College. This proposal is often credited with coining the term "artificial intelligence" and is considered the official birth of AI as a field.

LISP Programming Language (1958): McCarthy developed LISP, which became the dominant programming language for AI research due to its excellent support for symbolic reasoning and manipulation.

"Programs with Common Sense" (1959): McCarthy's paper addressed the development of programs capable of reasoning about everyday situations using formal logic.

Logic Theorist (1955)

Developed by Herbert Simon, Allen Newell, and Cliff Shaw, this program was designed to mimic human problem-solving skills by proving mathematical theorems from Alfred North Whitehead and Bertrand Russell's Principia Mathematica. It was one of the first programs to demonstrate automated reasoning.

Frank Rosenblatt

Perceptron (1957): Rosenblatt introduced the Perceptron, an early model of a neural network that could perform pattern recognition tasks. This work laid the foundation for later developments in neural networks and deep learning.
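As a rough illustration of how a perceptron-style unit learns, the sketch below uses the error-correction update commonly taught as the perceptron learning rule. The function names, the learning rate, the epoch count, and the toy AND dataset are assumptions made for this example; it is not a reconstruction of Rosenblatt's original hardware or experiments.

```python
def predict(weights, bias, x):
    """Return 1 if the weighted sum plus bias is positive, else 0."""
    activation = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if activation > 0 else 0


def train_perceptron(samples, epochs=20, lr=1.0):
    """Nudge the weights toward each misclassified sample."""
    n_inputs = len(samples[0][0])
    weights, bias = [0.0] * n_inputs, 0.0
    for _ in range(epochs):
        for x, target in samples:
            error = target - predict(weights, bias, x)  # -1, 0, or +1
            weights = [w + lr * error * xi for w, xi in zip(weights, x)]
            bias += lr * error
    return weights, bias


# Toy, linearly separable dataset: the logical AND of two inputs.
AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_perceptron(AND_DATA)
```

A single unit of this kind can only separate classes with a straight line (or hyperplane), which is why a function such as XOR is out of its reach.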

Arthur Samuel

In 1959, he coined the term "machine learning" while discussing how computers could learn to play checkers better than their programmers through experience.

Oliver Selfridge

"Pandemonium: A paradigm for learning" (1959): In this paper presented at the Symposium on Mechanization of Thought Processes, Selfridge described a model for pattern recognition in machines that laid groundwork for later AI developments.

AI Research from 1960 to 1970

Between 1960 and 1970, AI experienced important developments, marked by pioneering research papers and projects that laid the groundwork for future advancements

The decade from 1960 to 1970 was pivotal for artificial intelligence, characterized by both groundbreaking achievements and significant challenges. Research during this period laid important theoretical foundations and introduced key concepts that would influence future AI developments. Despite facing setbacks like the criticisms highlighted in Minsky and Papert's work on perceptrons, the innovations from this era set the stage for subsequent advancements in AI technologies, including expert systems and natural language processing that would emerge in later decades.

Here's an overview of key AI research papers, projects, and milestones from this decade:

"Man-Computer Symbiosis" by J.C.R. Licklider (1960)

This influential paper proposed the idea of a collaborative relationship between humans and computers, emphasizing how computers could augment human intelligence rather than merely perform tasks.

SAINT (Symbolic Automatic INTegrator) by James Slagle (1961)

SAINT was one of the first programs capable of performing symbolic integration in calculus, showcasing early efforts in applying AI to mathematical problem-solving.

General Problem Solver (GPS) by Herbert Simon, Allen Newell, and J.C. Shaw (1961)

GPS was an early AI program designed to solve problems through heuristic search methods. It demonstrated that machines could mimic human problem-solving strategies, contributing to the development of symbolic reasoning in AI.

STUDENT by Daniel Bobrow (1964)

This program was designed for natural language understanding and could solve algebra word problems presented in English. It represented significant progress in integrating language processing with problem-solving capabilities.

DENDRAL by Edward Feigenbaum et al. (1965)

DENDRAL was an early expert system developed for chemical analysis, specifically to interpret mass spectrometry data. It demonstrated the application of AI in scientific reasoning and knowledge-based systems.

Resolution Principle by Alan Robinson (1965)

Philosopher-mathematician Alan Robinson created the "resolution principle," which let programs work efficiently with formal logic as a representation language when searching for mathematical proofs. Combined with unification, resolution provides a single inference rule that is refutation-complete for first-order logic, so a program can mechanically test whether a conclusion follows from a set of premises.

ELIZA by Joseph Weizenbaum (1966)

ELIZA was one of the first natural language processing programs, simulating a conversation with a psychotherapist. It showcased the potential for machines to understand and generate human-like text, sparking interest in human-computer interaction.
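ELIZA produced its replies by matching keywords in the user's input and filling canned response templates. The sketch below shows that general idea only. The rules, the reflection table, and the function names are hypothetical examples written for this illustration and are not taken from Weizenbaum's original script.

```python
import re

# Hypothetical rules for illustration; not Weizenbaum's original script.
RULES = [
    (re.compile(r"\bI need (.+)", re.IGNORECASE), "Why do you need {0}?"),
    (re.compile(r"\bI am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (mother|father)\b", re.IGNORECASE),
     "Tell me more about your {0}."),
]
DEFAULT_REPLY = "Please go on."

# Swap first-person words for second-person ones so the echo reads naturally.
REFLECTIONS = {"my": "your", "me": "you", "i": "you", "am": "are"}


def reflect(fragment):
    """Strip trailing punctuation and flip pronouns in the captured text."""
    words = fragment.rstrip(".!?").split()
    return " ".join(REFLECTIONS.get(word.lower(), word) for word in words)


def respond(utterance):
    """Return the template of the first matching rule, or a stock reply."""
    for pattern, template in RULES:
        match = pattern.search(utterance)
        if match:
            return template.format(reflect(match.group(1)))
    return DEFAULT_REPLY
```

For example, `respond("I need a break from my job.")` returns `"Why do you need a break from your job?"`, even though the program has no model of what a job or a break is.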

The ALPAC Report (1966)

The Automatic Language Processing Advisory Committee published a report criticizing the state of machine translation research, leading to decreased funding and interest in natural language processing for several years.

"Perceptrons" by Marvin Minsky and Seymour Papert (1969)

This book critically analyzed the limitations of single-layer perceptrons, a type of artificial neural network. It highlighted the challenges of using simple neural networks for complex pattern recognition tasks. The publication is often cited as a catalyst for the first "AI winter," a period characterized by reduced funding and interest in AI research.

"Some Philosophical Problems from the Standpoint of Artificial Intelligence" by John McCarthy and Patrick J. Hayes (1969)

This paper discussed foundational issues in AI related to representation and reasoning, including what would later be known as the frame problem.

Semantic Networks

During this period, researchers like Ross Quillian developed semantic networks as a way to represent knowledge in AI systems, allowing for more complex relationships between concepts to be modeled.

AI Research from 1970 to 1980

From 1970 to 1980, AI witnessed significant advancements, marked by the development of new theories, systems, and applications

The 1970s were characterized by both significant achievements and challenges in artificial intelligence research. While advancements like MYCIN and XCON showcased practical applications of AI, critical assessments such as the Lighthill Report led to reduced funding and interest in the field, resulting in an "AI winter." Nevertheless, foundational work during this decade laid important groundwork for future developments in expert systems, natural language processing, and robotics that would emerge in subsequent years.

Here's an overview of key research papers and milestones from this decade:

MYCIN (1972):

Developed at Stanford University, MYCIN was one of the earliest expert systems designed to diagnose bacterial infections and recommend antibiotics. It utilized a rule-based approach to mimic the decision-making abilities of human experts in medicine.

"Speech Recognition by Machine: A Review" by Raj Reddy (1976):

This paper summarized early work on speech recognition technology and its challenges, providing a comprehensive overview of the state of the field at that time. Reddy's work highlighted the potential for machines to understand human speech.

"The Lighthill Report" (1973):

Sir James Lighthill published a critical report assessing AI research in the UK, concluding that progress in AI had not met expectations. The report led to significant funding cuts for AI projects, marking the beginning of what is often referred to as the "AI winter."

XCON (eXpert CONfigurer) (1978):

Developed at Carnegie Mellon University, XCON was a rule-based expert system that assisted in configuring orders for Digital Equipment Corporation's VAX computers. It demonstrated practical applications of AI in business settings.

"A Proposal for the Dartmouth Summer Research Project on Artificial Intelligence" (1955):

Although this proposal was made earlier, it continued to influence AI research throughout the 1970s by framing the goals of AI research and fostering collaboration among researchers.

Shakey the Robot (1966-1972):

Developed at Stanford Research Institute, Shakey was one of the first mobile robots capable of reasoning about its actions and navigating through its environment using sensors and a camera. It represented significant progress in robotics and AI.

"LISP: A Language for Artificial Intelligence"

During this period, LISP became widely adopted as a programming language for AI research due to its powerful features for symbolic computation and manipulation.

"Human Problem Solving" by Allen Newell and Herbert A. Simon (1972):

This book presented the authors' information-processing account of how people solve problems, discussing problem-solving techniques and cognitive modeling.

"Heuristic Programming in Artificial Intelligence" by Allen Newell et al. (1971):

This work explored heuristic methods for problem-solving in AI, emphasizing the importance of using rules of thumb to navigate complex decision-making processes.

Development of Prolog:

Prolog emerged as a prominent programming language for AI during this decade, particularly for applications in natural language processing and expert systems due to its logical programming capabilities.

AI Research from 1980 to 1990

Between 1980 and 1990, AI experienced rapid growth, marked by advancements in expert systems, neural networks, and increased funding for AI programs

The decade from 1980 to 1990 was a transformative period for artificial intelligence, characterized by both significant advancements and challenges. While expert systems gained popularity and neural network research saw a resurgence with backpropagation, the field also faced setbacks due to disillusionment following earlier hype. Despite these challenges, foundational work during this time laid the groundwork for future developments in AI that would emerge in the following decades, ultimately leading to the modern era of machine learning and deep learning technologies.

Here's an overview of key research papers and developments from this decade:

Expert Systems:

The 1980s saw a surge in the development and application of expert systems, which are AI programs that emulate the decision-making ability of a human expert. A notable example was XCON (also known as R1). Developed by John McDermott of Carnegie Mellon University for Digital Equipment Corporation (DEC), XCON was one of the first commercially successful expert systems and was used to configure orders for computer systems.

"Backpropagation Applied to Handwritten Zip Code Recognition" (1989):

This paper by Yann LeCun and colleagues applied the backpropagation algorithm, popularized in 1986 by David Rumelhart, Geoffrey Hinton, and Ronald Williams, to the recognition of handwritten zip code digits. It showed that multi-layer networks could be trained effectively on a real-world task, significantly impacting the development of deep learning techniques.

Fifth Generation Computer Systems (FGCS):

Launched by the Japanese government in 1982, this initiative aimed to develop computers that could use logic programming and knowledge-based processing. Although it did not achieve its ambitious goals, it spurred research in AI and influenced global AI projects.

"Artificial Intelligence: A New Synthesis" by Nils J. Nilsson (1998):

Although published slightly after the decade in question, Nilsson's work during this period contributed significantly to AI's theoretical foundations and its applications across various domains.

"The Society of Mind" by Marvin Minsky:

Minsky's ideas during this period laid the groundwork for understanding how intelligence could be constructed from simpler processes. His work influenced both cognitive science and AI.

"Elephants Don't Play Chess" by Rodney Brooks (1990):

In this influential paper, Brooks critiqued traditional AI approaches based on symbolic reasoning and proposed a behavior-based approach to robotics. His work shifted focus toward creating robots that could interact with their environment more effectively.

AI Winter:

The late 1980s saw a decline in AI funding and interest due to unmet expectations from earlier hype surrounding expert systems and AI capabilities. The Lighthill Report had already set the stage for skepticism regarding AI's potential.

Development of LISP Machines:

Specialized hardware designed for running LISP programs became popular during this decade, facilitating the development of AI applications but also contributing to the financial challenges faced by many companies as costs rose.

Neural Network Research:

Interest in neural networks was revived with new algorithms and architectures being developed during this period, leading to significant advancements in machine learning research.

Commercial Applications:

The 1980s marked the beginning of commercial applications of AI technologies in various industries, including finance, healthcare, and manufacturing, as businesses began adopting expert systems for decision support.

AI Research from 1990 to 2000

From 1990 to 2000, AI research saw many advancements, particularly in machine learning, neural networks, and natural language processing

The decade from 1990 to 2000 was pivotal for artificial intelligence, characterized by significant advancements in machine learning techniques, particularly neural networks and reinforcement learning. Key research papers and breakthroughs during this time not only advanced theoretical understanding but also led to practical applications that continue to influence AI development today. The emergence of powerful algorithms and systems set the stage for the rapid growth of AI technologies in the following decades.

Here's an overview of key research papers and developments from this decade:

"Learning Representations by Back-propagating Errors" by David Rumelhart, Geoffrey Hinton, and Ronald Williams (1986):

Although published slightly earlier, this paper laid the foundation for the resurgence of interest in neural networks during the 1990s. The backpropagation algorithm became a cornerstone for training multi-layer neural networks.

"Probabilistic Reasoning in Intelligent Systems" by Judea Pearl (1988):

This influential book established the framework for Bayesian networks, which became essential for reasoning under uncertainty in AI systems. It had a lasting impact on machine learning and AI applications.

"The Coming Technological Singularity" by Vernor Vinge (1993):

In this paper, Vinge predicted that technological advances would lead to superhuman intelligence within thirty years. His ideas spurred discussions about the implications of advanced AI and its potential impact on society.

"Neural Networks for Pattern Recognition" by Christopher Bishop (1995):

This book provided comprehensive coverage of neural network techniques for pattern recognition, contributing to the understanding and application of neural networks in various fields.

"A.L.I.C.E.: An Artificial Linguistic Internet Computer Entity" by Richard Wallace (1995):

Wallace developed A.L.I.C.E., a chatbot that utilized natural language processing to engage users in conversation. It was inspired by earlier work like ELIZA but incorporated more sophisticated techniques for understanding language.

Image: Deep Blue vs. Garry Kasparov

"Long Short-Term Memory" (LSTM) by Sepp Hochreiter and Jürgen Schmidhuber (1997):

This groundbreaking paper introduced LSTMs, a type of recurrent neural network designed to overcome issues with standard RNNs, such as vanishing gradients. LSTMs have since become a fundamental architecture in deep learning applications.

"Gradient-Based Learning Applied to Document Recognition" by Yann LeCun et al. (1998):

This paper presented a convolutional neural network (CNN) architecture that achieved state-of-the-art results in document recognition tasks, showcasing the power of deep learning approaches.

"The Emotionally Intelligent Agent: A New Paradigm for Human-Computer Interaction" by Rosalind Picard (1997):

Picard's work on affective computing explored how machines could recognize and respond to human emotions, opening new avenues for human-computer interaction research.

"Reinforcement Learning: An Introduction" by Richard S. Sutton and Andrew G. Barto (1998):

This foundational text provided an extensive overview of reinforcement learning techniques, which have become crucial for training intelligent agents capable of decision-making based on feedback from their environment.

"The Neural Network Zoo":

During this decade, various researchers contributed to the development of different types of neural networks beyond feedforward networks, including convolutional networks and recurrent networks, expanding the applicability of neural networks across diverse domains.

Advancements in Machine Translation:

The shift from rule-based approaches to statistical methods for machine translation began during this period, with significant contributions from IBM's research teams that laid the groundwork for modern translation systems.

AI Research from 2000 to 2010

In the first decade of the century, AI research produced advances in machine learning, natural language processing, and computer vision

The decade from 2000 to 2010 was marked by key advancements in artificial intelligence, particularly with the rise of machine learning techniques and deep learning architectures. Important research papers laid the foundation for future developments in AI technologies that would lead to breakthroughs in various applications such as computer vision, natural language processing, and reinforcement learning. The innovations from this period set the stage for the rapid growth of AI that followed in subsequent years.

Here's an overview of key research papers and developments from this decade:

"A Few Useful Things to Know About Machine Learning" by Pedro Domingos (2012):

Although published slightly after the decade, this paper summarizes key insights and principles of machine learning that emerged during the 2000s, providing a comprehensive overview of the field.

"Learning Multiple Layers of Representation" by Geoffrey Hinton (2007):

This paper discusses deep learning techniques and the importance of multi-layer neural networks. Hinton's work during this period helped lay the groundwork for the resurgence of neural networks in AI.

"ImageNet: A Large-Scale Hierarchical Image Database" by Fei-Fei Li et al. (2009):

This landmark paper introduced ImageNet, a large database of annotated images designed to support visual object recognition research. ImageNet became a critical resource for training deep learning models.

"Large-Scale Deep Unsupervised Learning using Graphics Processors" by Rajat Raina et al. (2009):

This paper highlighted the potential of using graphics processing units (GPUs) for deep learning, demonstrating how modern hardware could revolutionize unsupervised learning methods.

"Deep Learning" by Yann LeCun, Yoshua Bengio, and Geoffrey Hinton (2015):

Although published in 2015, this paper reflects on the developments in deep learning throughout the 2000s, summarizing key techniques and breakthroughs that shaped the field.

"Statistical Language Models Based on N-grams" by Frederick Jelinek (1997):

While published earlier, n-gram models gained popularity in the early 2000s for natural language processing tasks, influencing subsequent research in statistical language modeling.
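To make the idea of an n-gram model concrete, here is a minimal sketch of a bigram (n = 2) model that estimates the probability of a word given the previous word from raw counts. The function names, the sentence boundary markers, and the three-sentence corpus are assumptions made for this example. Practical systems add smoothing for unseen word pairs, which this sketch omits.

```python
from collections import Counter, defaultdict


def train_bigram_model(sentences):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        # <s> and </s> mark the start and end of a sentence.
        tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts


def bigram_probability(counts, prev, nxt):
    """Maximum-likelihood estimate of P(nxt | prev); 0.0 if prev is unseen."""
    total = sum(counts[prev].values())
    return counts[prev][nxt] / total if total else 0.0


# Toy corpus, invented for illustration.
CORPUS = ["the cat sat", "the cat ran", "the dog sat"]
model = train_bigram_model(CORPUS)
```

With this corpus, "cat" follows "the" in two of the three sentences, so `bigram_probability(model, "the", "cat")` evaluates to 2/3.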

"The Unreasonable Effectiveness of Data" by Alon Halevy et al. (2009):

This influential paper discussed how large amounts of data can lead to improved machine learning models and insights, emphasizing the importance of data-driven approaches in AI.

"Playing Atari with Deep Reinforcement Learning" by Volodymyr Mnih et al. (2013):

Although published slightly later, this research built on ideas developed in the previous decade regarding reinforcement learning and neural networks to achieve human-level performance in playing Atari games.

Development of Convolutional Neural Networks (CNNs):

During this period, CNNs gained traction for image recognition tasks, leading to significant improvements in accuracy for various computer vision applications.

Natural Language Processing Advances:

The introduction of statistical methods for machine translation and text analysis became prominent during this decade, with systems like Google Translate beginning to leverage these techniques.

DARPA Grand Challenge:

First held in 2004, with follow-up events in 2005 and 2007 (the Urban Challenge), this competition for autonomous vehicles drove advancements in robotics and AI technologies related to navigation and perception.

Launch of the ImageNet Large Scale Visual Recognition Challenge (ILSVRC) (2010):

This annual competition focused on image classification tasks using large datasets and played a crucial role in advancing computer vision research.

AI Research from 2010 to 2020

Between 2010 and 2020, AI research experienced rapid growth and diversification across various fields, including machine learning, natural language processing, robotics, and healthcare

The decade from 2010 to 2020 was transformative for artificial intelligence, characterized by significant advancements in deep learning techniques and their applications across various fields. Key research papers laid the groundwork for modern AI technologies that continue to influence industry practices and academic research today. The focus on ethical considerations and real-world applications also highlights the growing recognition of AI's impact on society as it becomes increasingly integrated into daily life.

Here's an overview of key research papers and developments from this decade:

"Deep Learning" by Yann LeCun, Yoshua Bengio, and Geoffrey Hinton (2015):

This landmark paper provided a comprehensive overview of deep learning techniques, discussing their applications and implications for AI. It highlighted the success of deep learning in various domains such as computer vision and speech recognition.

"ImageNet Classification with Deep Convolutional Neural Networks" by Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton (2012):

This paper introduced the AlexNet architecture, which won the ImageNet Large Scale Visual Recognition Challenge. It demonstrated the effectiveness of deep convolutional neural networks (CNNs) in image classification tasks.

"Playing Atari with Deep Reinforcement Learning" by Volodymyr Mnih et al. (2013):

This influential paper presented a deep reinforcement learning algorithm that combined deep learning with reinforcement learning techniques to achieve human-level performance in playing Atari games.

"Generative Adversarial Nets" by Ian Goodfellow et al. (2014):

This groundbreaking paper introduced Generative Adversarial Networks (GANs), a novel framework for training generative models through adversarial processes. GANs have since been widely used in image generation and other applications.

"Attention Is All You Need" by Ashish Vaswani et al. (2017):

This paper introduced the Transformer architecture, which relies on self-attention mechanisms to process sequential data. The Transformer model has become foundational for many natural language processing tasks, including machine translation.
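The self-attention mechanism mentioned above can be sketched in a few lines. The example below implements scaled dot-product attention, softmax(QK^T / sqrt(d_k))V, in plain Python. It is a simplified illustration under stated assumptions: the function names are chosen for this example, and the learned projection matrices, multiple heads, and masking used in the full Transformer are left out.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(queries, keys, values):
    """For each query, return a weighted average of the value vectors."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs
```

In self-attention, the queries, keys, and values all come from the same sequence, for example `scaled_dot_product_attention(tokens, tokens, tokens)`, so every position can draw on information from every other position in a single step.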

"BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding" by Jacob Devlin et al. (2018):

BERT (Bidirectional Encoder Representations from Transformers) revolutionized natural language processing by introducing a method for pre-training language representations that can be fine-tuned for various tasks.

"Deep Reinforcement Learning from Human Preferences" by Paul F. Christiano et al. (2017):

This research explored how reinforcement learning can be improved by incorporating human feedback to guide the learning process, addressing challenges in training AI systems to align with human values.

AI in Healthcare:

Numerous studies emerged during this period focusing on AI applications in healthcare, such as using machine learning algorithms for disease diagnosis, treatment recommendations, and predicting patient outcomes.

"Artificial Intelligence--The Revolution Hasn't Happened Yet" by Michael Jordan (2018):

In this influential article, Jordan discussed the limitations of current AI technologies and emphasized the need for a broader understanding of intelligence beyond mere data-driven approaches.

AI Ethics:

The decade saw increasing attention to ethical considerations surrounding AI, with numerous papers addressing issues such as bias in algorithms, transparency, accountability, and the societal impacts of AI technologies.

AI in Education:

Research papers explored the integration of AI in educational settings, focusing on personalized learning experiences through intelligent tutoring systems and adaptive learning technologies.

COVID-19 Response:

The COVID-19 pandemic spurred research on using AI for tracking the spread of the virus, optimizing healthcare resources, and developing predictive models for outbreak management.

Current Research

Most Cited AI Papers

Doradolist provides a list of the 21 most cited machine learning papers, which are critical for understanding foundational concepts in AI. Some key papers include:

- "Deep Residual Learning for Image Recognition" by Kaiming He et al. (2016): This paper introduced residual networks (ResNets), significantly improving the training of deeper neural networks.

- "Generative Adversarial Nets" by Ian Goodfellow et al. (2014): A groundbreaking paper that proposed a framework for training generative models through adversarial processes, leading to advancements in image generation.

Recent Influential Papers

According to Zeta Alpha, the top cited AI papers from 2020 to 2022 illustrate the rapid evolution of AI technologies:

- "Training Language Models to Follow Instructions with Human Feedback" by OpenAI (2022): This paper discusses methods for improving language models to better understand and follow user instructions.

- "Exploring the Limits of Transfer Learning with a Unified Text-to-Text Transformer" (T5) by Google: This study focuses on transfer learning techniques using transformer models, demonstrating their effectiveness across various NLP tasks.

Historical Papers

The GitHub repository "awesome-ai-papers" lists significant AI papers organized by publication date across various fields such as computer vision, natural language processing, and reinforcement learning. This resource serves as a comprehensive archive for researchers looking to explore influential works in AI.

Community Insights

Here are some other key documents that have made substantial impacts in recent years:

- "Attention Is All You Need": This paper introduced the transformer architecture, which has become foundational in NLP and beyond.

- "GPT-3: Language Models Are Few-Shot Learners": This work showcased the capabilities of large language models in performing tasks with minimal examples.

Links

jmc.stanford.edu/articles/index.html

courses.cs.washington.edu/courses/csep590/06au/projects/history-ai.pdf

dl.acm.org/doi/abs/10.1155/2021/8812542

en.wikipedia.org/wiki/Artificial_intelligence_in_myths_and_legends

en.wikipedia.org/wiki/Timeline_of_artificial_intelligence

github.com/aimerou/awesome-ai-papers

onlinelibrary.wiley.com/doi/10.1155/2021/8812542

ourworldindata.org/grapher/annual-scholarly-publications-on-artificial-intelligence

oxford.shorthandstories.com/ai-a-history/index.html

people.idsia.ch/~juergen/deep-learning-miraculous-year-1990-1991-may2021.html

sites.lafayette.edu/ge/2022/10/23/artificial-intelligence-across-time-1990s-vs-2010s/

st.llnl.gov/news/look-back/birth-artificial-intelligence-ai-research

artfish.ai/p/ai-generated-research-papers

bighuman.com/blog/history-of-artificial-intelligence

coursera.org/articles/history-of-ai

doradolist.com/papers/21-most-cited-machine-learning-papers

forbes.com/sites/gilpress/2016/12/30/a-very-short-history-of-artificial-intelligence-ai/

freecodecamp.org/news/the-history-of-ai/

holloway.com/g/making-things-think/sections/the-ai-boom-19801987

kdnuggets.com/2018/02/resurgence-ai-1983-2010.html

klondike.ai/en/ai-history-the-innovations-of-the-90s-and-deep-blue/

reddit.com/r/MachineLearning/comments/16ij18f/d_the_ml_papers_that_rocked_our_world_20202023/

sciencedirect.com/science/article/pii/S0040162523002640

sciencedirect.com/science/article/pii/S0268401221000761

technologyreview.com/2023/04/11/1071104/ai-helping-historians-analyze-past/

zeta-alpha.com/post/must-read-the-100-most-cited-ai-papers-in-2022