AI Data Science


Provides the data and preparatory work needed to train and fine-tune AI models

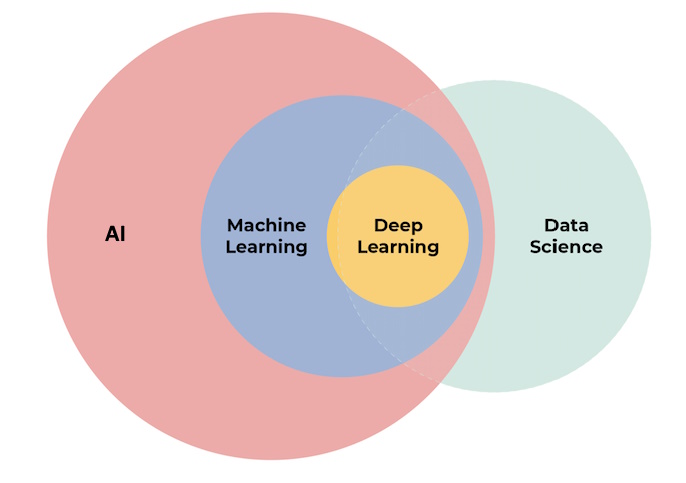

AI data science is an interdisciplinary field that combines artificial intelligence (AI) with data science to extract insights and knowledge from structured and unstructured data using advanced computational techniques. As organizations rely more heavily on data-driven decision-making, AI data science has become a key component of fields such as healthcare, finance, and retail.

The rise of machine learning, a subset of AI focused on enabling machines to learn from data, has propelled AI data science to the forefront of technological advancements. Through methods such as supervised, unsupervised, and reinforcement learning, AI systems can autonomously analyze vast datasets to uncover patterns, predict outcomes, and automate processes.

The integration of AI and data science is not without its challenges. Ethical considerations, including algorithmic bias, data privacy, and the explainability of AI systems, have raised questions regarding the implications of these technologies. Stakeholders are increasingly advocating for responsible AI practices to ensure fairness and accountability in automated decision-making. As AI and data science continue to evolve, their applications are expected to expand further and influence the future landscape of technology and society.

History


The history of AI, and of the machine learning and deep learning techniques that now drive it, reaches back to the early 20th century. The decades from 1910 to 1950 saw significant advancements that established the foundations of machine learning:

- In 1913, Andrey Markov applied the chains he had introduced in 1906, now known as Markov chains, to the sequence of letters in Pushkin's "Eugene Onegin". Markov chains later became essential to various machine learning algorithms.
- Alan Turing's 1936 paper "On Computable Numbers" introduced the Turing machine, an abstract model of computation that provided a theoretical basis for the development of computing machines.
- In 1943, Warren McCulloch and Walter Pitts published the first mathematical model of a neural network, beginning research into the neural networks that are fundamental to many AI systems.
- ENIAC, completed in 1945 and often described as the first electronic general-purpose computer, marked a significant leap forward in computational technology.
- Donald Hebb's 1949 book "The Organization of Behavior" introduced Hebbian learning, a principle that remains important for understanding learning processes in neural networks.

Development and Challenges in AI

The journey of AI has not been linear; it has experienced breakthroughs as well as periods of stagnation referred to as "AI winters." The field of AI formally began gaining traction in the 1950s with the emergence of symbolic reasoning and rule-based systems. However, the limitations of these early systems led to disillusionment during the 1970s and 1980s.

Despite these challenges, AI continued to evolve, particularly with the introduction of machine learning as a distinct subfield. The ability of machines to learn from data and improve over time became a driving force behind advancements in AI applications. As computational power increased and large datasets became available, machine learning techniques, particularly those involving deep learning, gained prominence, leading to the current AI renaissance.

The Rise of Machine Learning and Data Science

In recent years, machine learning, which applies statistical techniques and algorithms to analyze and interpret complex datasets, has emerged as a key component of AI. Data science, which encompasses data collection, processing, analysis, and modeling, has become increasingly integrated with machine learning to support better decision-making. By applying machine learning techniques, data science transforms vast amounts of data into actionable insights. Today, AI applications continue to grow, with machine learning and deep learning at the forefront.

Key Concepts


AI and Machine Learning

AI is a transformative technology that encompasses various methodologies, including machine learning. Machine learning is a branch of AI focused on enabling systems to learn from data and improve over time without being explicitly programmed for each task. This is achieved through different approaches, such as supervised, unsupervised, semi-supervised, and reinforcement learning, each of which uses its own techniques for training algorithms and deriving insights from data.

⚡Supervised Learning

Supervised learning trains algorithms on labeled datasets, in which each example is paired with a known outcome. By learning the relationship between inputs and outcomes in historical data, these algorithms can identify patterns and make predictions about new cases. This method is critical in applications where past examples with known outcomes are essential for accuracy, such as financial forecasting or medical diagnosis.
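As a minimal illustration of learning from labeled data, the sketch below fits a straight line to a small, hypothetical set of input/outcome pairs using ordinary least squares, one of the simplest supervised methods. The function names `fit_linear` and `predict` and the data are illustrative assumptions, not part of any library.

```python
def fit_linear(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Return (slope, intercept) minimizing squared error on labeled (x, y) pairs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Least-squares slope = covariance(x, y) / variance(x)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(model: tuple[float, float], x: float) -> float:
    """Apply the fitted line to a new, unlabeled input."""
    slope, intercept = model
    return slope * x + intercept


# Hypothetical labeled history: each input is paired with its known outcome.
history_x = [1.0, 2.0, 3.0, 4.0]
history_y = [3.0, 5.0, 7.0, 9.0]  # generated by y = 2x + 1

model = fit_linear(history_x, history_y)
print(model)                # (2.0, 1.0)
print(predict(model, 5.0))  # 11.0
```

The labels are what make this supervised: the fit is driven entirely by the known outcomes paired with each input.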

⚡Unsupervised Learning

In contrast, unsupervised learning does not rely on labeled data. Instead, it aims to discover hidden structures within datasets, helping systems to infer functions and relationships autonomously. This technique is often utilized in clustering tasks or anomaly detection, where predefined categories are unavailable.
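Clustering is a typical unsupervised task. The sketch below is a plain version of k-means (Lloyd's algorithm), assuming 2-D points and, for simplicity, using the first `k` points as initial centroids; production implementations usually use smarter seeding such as k-means++. The data is hypothetical.

```python
import math

Point = tuple[float, float]


def kmeans(points: list[Point], k: int, max_iter: int = 100) -> tuple[list[Point], list[int]]:
    """Lloyd's k-means, seeded (for simplicity) with the first k points as centroids."""
    centroids = list(points[:k])
    labels: list[int] = []
    for _ in range(max_iter):
        # Assignment step: each point joins the cluster of its nearest centroid.
        labels = [min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points]
        # Update step: move each centroid to the mean of the points assigned to it.
        new_centroids = []
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:
                xs, ys = zip(*members)
                new_centroids.append((sum(xs) / len(xs), sum(ys) / len(ys)))
            else:
                new_centroids.append(centroids[c])  # leave an empty cluster's centroid in place
        if new_centroids == centroids:
            break  # centroids stopped moving: converged
        centroids = new_centroids
    return centroids, labels


# Hypothetical unlabeled data: (visits per month, average basket size)
points = [(1.0, 1.0), (8.0, 8.0), (1.5, 2.0), (9.0, 8.5), (1.0, 1.5), (8.5, 9.0)]
centroids, labels = kmeans(points, k=2)
print(labels)  # [0, 1, 0, 1, 0, 1]
```

No labels are supplied; the two groups emerge from distances between points alone.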

⚡Semi-Supervised Learning

Semi-supervised learning combines elements of both supervised and unsupervised learning, making it particularly advantageous when labeled data is scarce or expensive to obtain. This approach uses a small amount of labeled data alongside a larger pool of unlabeled data to improve accuracy, proving useful in scenarios such as medical diagnostics for rare diseases.
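One simple semi-supervised technique is self-training (pseudo-labeling). The sketch below assumes 1-D data and a 1-nearest-neighbour classifier: each round, the unlabeled point closest to any labeled point, treated here as the most confident prediction, receives that neighbour's label and joins the labeled set. The function `self_train` and the labels `"A"`/`"B"` are hypothetical.

```python
def self_train(labeled: list[tuple[float, str]], unlabeled: list[float]) -> list[tuple[float, str]]:
    """Self-training with a 1-nearest-neighbour classifier on 1-D values."""
    labeled = list(labeled)
    remaining = list(unlabeled)
    while remaining:
        # Find the (unlabeled point, labeled neighbour) pair with the smallest distance.
        best_x, best_label, best_dist = remaining[0], labeled[0][1], float("inf")
        for x in remaining:
            for lx, label in labeled:
                d = abs(x - lx)
                if d < best_dist:
                    best_x, best_label, best_dist = x, label, d
        # Pseudo-label the most confident point and treat it as labeled from now on.
        labeled.append((best_x, best_label))
        remaining.remove(best_x)
    return labeled


# Two labeled examples and several unlabeled ones (hypothetical values).
result = self_train([(1.0, "A"), (10.0, "B")], [2.0, 3.0, 4.0, 5.0, 9.0, 8.0, 7.0])
print(sorted(result))
```

Because pseudo-labels feed later rounds, labels spread along dense regions of the unlabeled data rather than depending only on the two original examples.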

⚡Reinforcement Learning

Reinforcement learning trains agents to make decisions by rewarding desired actions and penalizing undesirable ones. This trial-and-error method enables agents to learn optimal strategies over time, which makes it especially applicable to robotics, gaming, and real-time decision-making. Common reinforcement learning algorithms include Q-learning and policy gradient methods, both of which allow agents to learn dynamically in complex environments.
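To make the trial-and-error loop concrete, here is a minimal tabular Q-learning sketch. The environment is a hypothetical five-state corridor in which reaching the last state earns a reward of 1; the learning rate, discount factor, exploration rate, and episode count are illustrative choices, not recommended settings.

```python
import random

N_STATES = 5        # states 0..4; state 4 is the goal
ACTIONS = (-1, +1)  # index 0 = move left, index 1 = move right


def step(state: int, move: int) -> tuple[int, float, bool]:
    """Hypothetical corridor: reaching the last state yields reward 1 and ends the episode."""
    next_state = min(max(state + move, 0), N_STATES - 1)
    if next_state == N_STATES - 1:
        return next_state, 1.0, True
    return next_state, 0.0, False


def q_learning(episodes: int = 500, alpha: float = 0.5, gamma: float = 0.9,
               epsilon: float = 0.1, seed: int = 0) -> list[list[float]]:
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: explore at random sometimes (and on ties), otherwise exploit.
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                a = rng.randrange(2)
            else:
                a = 0 if q[state][0] > q[state][1] else 1
            next_state, reward, done = step(state, ACTIONS[a])
            # Q-learning update: bootstrap from the best next action (no future value if terminal).
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
    return q


q = q_learning()
policy = ["right" if row[1] > row[0] else "left" for row in q[:-1]]
print(policy)  # ['right', 'right', 'right', 'right']
```

The agent is never told that "right" is correct; the reward at the goal propagates backward through the Q-values until the greedy policy points toward it from every state.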

Reasoning in AI

The integration of reasoning capabilities marks a significant advancement in AI, enabling systems to handle complex, multi-step decision-making. Models like OpenAI's o1 and Google's Gemini 2.0 Flash Thinking Mode exemplify this progression: they work through problems step by step before answering, which supports more nuanced understanding and planning and lets users interact with AI in a more human-like manner. This evolution positions reasoning as a critical component of future AI applications and enhances businesses' ability to use AI for actionable insights and strategic planning.

Data Processing and Insights

The ability to process data effectively and derive insights from it is key in the age of AI and machine learning. Skills such as data understanding, processing, visualization, and communication are expected to remain in demand in the coming decades. Organizations should foster an environment in which employees have the skills needed to apply AI technologies and understand the data science process.

Applications

Applications

Healthcare

In the healthcare sector, AI advancements have significantly improved diagnostic capabilities and patient care. Machine learning techniques in medical imaging enhance the accuracy of diagnoses, while advanced analytics tools provide insights for personalized treatment plans and can help healthcare providers reduce operational costs. These innovations demonstrate the transformative potential of AI in delivering better healthcare outcomes and optimizing resource allocation.

Autonomous Systems

The development of autonomous systems, particularly self-driving vehicles and drones, showcases another application of AI. Companies like Cruise and Shield AI use machine learning and computer vision to improve the safety and efficiency of self-driving cars and drones, respectively. These systems are designed to operate in complex environments and to learn continuously from massive datasets to improve their capabilities.

Financial Services

In the financial sector, AI and machine learning have transformed credit underwriting and risk management. Modern credit underwriting models incorporate alternative data, such as educational background and social networks, to complement traditional financial evaluation metrics. Generative AI supports risk management by processing both structured and unstructured data to identify potential issues in real time and minimize losses. AI-driven forecasting models can predict risks, lending rates, and market movements more accurately than conventional linear models.

Morgan Stanley developed AI @ Morgan Stanley Debrief, an AI-driven tool that supports its financial advisors. With client consent, it generates notes and summaries of client meetings and drafts follow-up emails, giving advisors more time to offer personalized guidance to their clients.

Retail and E-commerce

Retailers use AI in many areas to enhance customer experience and improve operational efficiency. For example, Teikametrics' Flywheel 2.0 platform optimizes advertising campaigns and automates search engine optimization. Similarly, Trendalytics uses AI to gather retail industry insights from social media and Google Trends, helping retailers identify product trends and competitors' pricing strategies. Companies also use AI to provide personalized product recommendations, increasing user engagement and conversion rates. In the fashion and beauty industries, advanced computer vision and natural language processing technologies make new forms of product discovery possible.
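Personalized recommendations are often built on collaborative filtering. The sketch below is a minimal item-based variant, assuming a small, hypothetical user-to-ratings table: each unseen item is scored by its cosine similarity to the items a user has already rated, weighted by those ratings. It is not the method of any company named here.

```python
import math

# Hypothetical ratings (user -> {item: rating}); unrated items are simply absent.
RATINGS: dict[str, dict[str, float]] = {
    "ana": {"boots": 5, "scarf": 4, "jacket": 5},
    "ben": {"boots": 4, "scarf": 5},
    "chloe": {"jacket": 4, "sandals": 5},
    "dev": {"boots": 5, "jacket": 4},
}


def item_vector(item: str) -> dict[str, float]:
    """Ratings an item received, keyed by user."""
    return {user: items[item] for user, items in RATINGS.items() if item in items}


def cosine(a: dict[str, float], b: dict[str, float]) -> float:
    """Cosine similarity between two sparse rating vectors."""
    dot = sum(a[u] * b[u] for u in set(a) & set(b))
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def recommend(user: str, top_n: int = 1) -> list[str]:
    """Score each unseen item by similarity to the user's rated items, weighted by rating."""
    seen = RATINGS[user]
    all_items = {item for items in RATINGS.values() for item in items}
    scores = {
        candidate: sum(cosine(item_vector(candidate), item_vector(item)) * rating
                       for item, rating in seen.items())
        for candidate in all_items - set(seen)
    }
    return sorted(scores, key=scores.get, reverse=True)[:top_n]


print(recommend("ben"))  # ['jacket']
```

Jackets win for this user because the people who rated boots and scarves highly also tended to rate jackets highly; sandals share no raters with those items and score zero.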

Transportation

Airlines have implemented data integration techniques to coordinate information across different departments and provide a holistic view essential for effective management. By utilizing data science methodologies, these companies can optimize flight schedules, manage resources efficiently, and improve customer service.

Customer Engagement

AI has also been integrated into customer engagement strategies. Examples include speech analytics and real-time feedback to make customer calls more efficient; analysis of consumer data to generate predictive insights that help brands craft targeted messaging campaigns; and automated call summaries and customer profile updates for contact center operations.

Development Tools

AI is also revolutionizing the software development process. Tools such as AI-enhanced continuous integration and continuous deployment (CI/CD) pipelines automate software deployment and monitoring to reduce downtime and deployment failures. Low-code and no-code platforms enable individuals with minimal coding experience to create advanced applications.

AI Data Science Careers

In data science, some of the key roles for data professionals are:

Data Scientist

A data scientist is responsible for the collection, analysis, and visualization of data. A data scientist is also expected to build effective models for extracting the right information from the data they collect. Depending on the company, a data scientist may work alone and report directly to the CEO, or they may work in a team and report to a team leader or head of business intelligence.

Data Engineer

A data engineer is a data professional whose work focuses more on the technical side. Data engineers are responsible for extracting and working with raw data, ensuring that it is collected and stored appropriately and that it is accessible to decision-makers in the company. This role is often a promotion from an initial data scientist role, as it requires more experience.

Data Analyst

A data analyst focuses mainly on data analysis, which involves cleaning, interpreting, and visualizing data with the appropriate data visualization tools. The role is similar to that of a data scientist, but places less emphasis on building models for extracting information from data.

Big Data Engineer

Big data engineers, like data engineers, work on extracting raw data; the difference lies in the much larger volume of the datasets they handle. Becoming a big data engineer usually requires several years of experience and well-rounded technical expertise.

Tools and Technologies


AI and data science rely on a variety of tools and technologies that serve different aspects of data analysis, machine learning, and productivity. These tools enable professionals to improve their workflows, automate tasks, and derive insights from complex datasets.

Programming Languages

Python remains the leading programming language in the field of data science due to its ease of use and the breadth of available libraries for data analysis and machine learning. Its dominance is expected to continue as new data professionals prioritize learning Python. Other relevant programming languages include R, Java, SQL, and Scala, each serving specific purposes in data analysis and machine learning applications.

AI-Powered Platforms

One of the prominent trends in data science is the integration of AI-powered platforms that allow easier access to advanced technology. For instance, Workspace AI offers features which enhance productivity for data scientists by providing intelligent suggestions and explanations for code errors. IBM Watson, now the watsonx portfolio, is an AI platform that supports a wide array of tools and services for natural language processing, image recognition, and data analysis. It is designed to help businesses analyze unstructured data and make data-driven decisions.

Machine Learning Libraries

Machine learning practitioners commonly use libraries such as scikit-learn and PyTorch. Scikit-learn is known for its versatility, supporting numerous supervised and unsupervised learning algorithms, and is popular among data scientists for tasks ranging from decision trees to regression analysis. PyTorch focuses on deep learning and offers a dynamic framework for building and experimenting with neural networks, which facilitates efficient development and training of models.

Automation and Productivity Tools

Automated machine learning (AutoML) tools simplify model selection and hyperparameter tuning, allowing data professionals to optimize their machine learning workflows with less manual effort. Platforms like ClickUp AI enhance productivity by integrating AI capabilities into project management to streamline organizational processes.
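At its core, hyperparameter tuning is a search over candidate settings scored by validation performance. AutoML tools automate this across many models and hyperparameters; the sketch below shows only the basic idea, a grid search over `k` for a 1-D k-nearest-neighbours classifier scored by leave-one-out cross-validation. The dataset, including one deliberately noisy label, is hypothetical.

```python
from collections import Counter


def knn_predict(train: list[tuple[float, str]], x: float, k: int) -> str:
    """Majority vote among the k training points nearest to x."""
    nearest = sorted(train, key=lambda p: abs(p[0] - x))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]


def loo_accuracy(data: list[tuple[float, str]], k: int) -> float:
    """Leave-one-out cross-validation: predict each point from all the others."""
    correct = 0
    for i, (x, y) in enumerate(data):
        train = data[:i] + data[i + 1:]
        correct += knn_predict(train, x, k) == y
    return correct / len(data)


def grid_search(data: list[tuple[float, str]], grid: list[int]) -> tuple[int, dict[int, float]]:
    """Score every candidate k and return the best (first one wins ties)."""
    scores = {k: loo_accuracy(data, k) for k in grid}
    best = max(grid, key=lambda k: scores[k])
    return best, scores


# Hypothetical data: two groups, with one noisy label ("b" at 4.0).
DATA = [(1.0, "a"), (2.0, "a"), (3.0, "a"), (4.0, "b"), (5.0, "a"),
        (10.0, "b"), (11.0, "b"), (12.0, "b"), (13.0, "b")]
best_k, scores = grid_search(DATA, [1, 3, 5])
print(best_k, scores)
```

Here `k = 1` is misled by the noisy point, so the search prefers a larger neighbourhood; the same loop structure applies to any model and metric.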

Data Visualization

Emerging trends in data visualization are shaping the tools used in data science. Using video for data visualization has been shown to increase viewer engagement, making it a valuable technique for translating complex data into formats accessible to decision-makers.

Challenges of AI Data Science

The integration of Data Science and AI presents significant challenges that must be addressed to harness the full potential of these transformative technologies. Key areas of concern include technical difficulties, ethical considerations, and the need for effective stakeholder engagement.

Technical Challenges

One of the primary technical challenges in AI and data science is the quality and quantity of data. The effectiveness of AI models depends heavily on accurate, comprehensive, and representative training data; poor data quality can lead to ineffective algorithms and biased outcomes. As AI applications become more complex, effective data management processes, including data quality control, audits, and regular updates, are needed to ensure that statistical patterns and relationships remain valid over time.
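Data quality control often starts with simple automated checks. The sketch below assumes records arrive as Python dictionaries and counts three common problems: missing required fields, numeric values outside an expected range, and exact duplicate rows. The field names and ranges are hypothetical.

```python
import math
from typing import Any


def quality_report(rows: list[dict[str, Any]], required: list[str],
                   ranges: dict[str, tuple[float, float]]) -> dict[str, int]:
    """Count missing values, out-of-range values, and exact duplicate rows."""
    report = {"missing": 0, "out_of_range": 0, "duplicates": 0}
    seen = set()
    for row in rows:
        for field in required:
            value = row.get(field)
            if value is None or (isinstance(value, float) and math.isnan(value)):
                report["missing"] += 1
        for field, (low, high) in ranges.items():
            value = row.get(field)
            # Only numeric, non-missing values are range-checked.
            if isinstance(value, (int, float)) and not math.isnan(value) and not low <= value <= high:
                report["out_of_range"] += 1
        key = tuple(sorted(row.items()))  # hashable fingerprint of the whole row
        if key in seen:
            report["duplicates"] += 1
        seen.add(key)
    return report


# Hypothetical customer records with one problem of each kind.
records = [
    {"customer_id": 1, "age": 34, "monthly_spend": 120.0},
    {"customer_id": 2, "age": None, "monthly_spend": 80.0},
    {"customer_id": 3, "age": 212, "monthly_spend": 95.5},
    {"customer_id": 1, "age": 34, "monthly_spend": 120.0},
]
print(quality_report(records, ["customer_id", "age", "monthly_spend"],
                     {"age": (0, 120), "monthly_spend": (0.0, 10_000.0)}))
# {'missing': 1, 'out_of_range': 1, 'duplicates': 1}
```

Running such checks on every data refresh is one way to turn "regular audits" into something measurable.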

Ethical Considerations

As AI technologies expand, it is important for data scientists and AI practitioners to consider the societal impacts of their work. Ethical considerations should be integrated into every stage of the design, development, and deployment of AI technologies. Translating concepts of fairness and causality into mathematical formulations that AI systems can use is a major hurdle for researchers and practitioners. Furthermore, regulatory frameworks often do not specify methods for achieving fair AI, so practitioners must take a proactive approach to algorithm design.
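As one example of turning fairness into a formula, demographic parity compares the rate of positive decisions, P(decision = 1 | group = g), across groups; a gap of 0 means every group is selected at the same rate. It is only one of several fairness definitions, and these definitions can conflict with one another. The decisions and group labels below are hypothetical.

```python
def selection_rate(decisions: list[int], groups: list[str], group: str) -> float:
    """Share of positive (1) decisions among members of one group."""
    picked = [d for d, g in zip(decisions, groups) if g == group]
    return sum(picked) / len(picked)


def demographic_parity_difference(decisions: list[int], groups: list[str]) -> tuple[float, dict[str, float]]:
    """Largest gap in selection rate between any two groups, plus the per-group rates."""
    rates = {g: selection_rate(decisions, groups, g) for g in sorted(set(groups))}
    return max(rates.values()) - min(rates.values()), rates


# Hypothetical loan approvals (1 = approved) for applicants in groups A and B.
approvals = [1, 1, 1, 0, 1, 0, 0, 0]
group_of = ["A", "A", "A", "A", "B", "B", "B", "B"]
gap, rates = demographic_parity_difference(approvals, group_of)
print(rates, gap)  # {'A': 0.75, 'B': 0.25} 0.5
```

A metric like this only flags a disparity; deciding whether it is unjustified, and what to do about it, still requires the proactive design choices described in the paragraph.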

Future Trends


As we advance further into the 21st century, the fields of AI and data science are expected to expand rapidly. Several key trends are projected to shape the landscape in the future. Some of these are:

Natural Language Processing

Natural language processing technology has seen significant progress and is anticipated to continue its upward trajectory. New models and frameworks are being developed to enhance machines' abilities to comprehend, interpret, and generate human language with greater accuracy and effectiveness.

Explainable AI

As AI systems become increasingly sophisticated, the need for transparency and interpretability grows. Explainable AI (XAI) encompasses methods that allow users to understand how algorithms derive their conclusions. This trend is important for fostering trust among users and ensuring the ethical deployment of AI technologies.
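A widely used model-agnostic explainability method is permutation feature importance: shuffle one input feature and measure how much the model's accuracy drops. The sketch below assumes a hypothetical black-box classifier that, unknown to the user, relies only on feature 0; the data and function names are illustrative.

```python
import random
from typing import Callable

Row = list[float]


def accuracy(model: Callable[[Row], int], X: list[Row], y: list[int]) -> float:
    return sum(model(row) == label for row, label in zip(X, y)) / len(y)


def permutation_importance(model: Callable[[Row], int], X: list[Row], y: list[int],
                           n_repeats: int = 10, seed: int = 0) -> list[float]:
    """Mean drop in accuracy when each feature column is randomly shuffled."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        total_drop = 0.0
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)
            # Replace feature j with its shuffled values; leave other features intact.
            X_perm = [row[:j] + [value] + row[j + 1:] for row, value in zip(X, column)]
            total_drop += baseline - accuracy(model, X_perm, y)
        importances.append(total_drop / n_repeats)
    return importances


def black_box(row: Row) -> int:
    """Hypothetical model: only feature 0 affects its output."""
    return int(row[0] > 0.5)


X = [[0.1, 5.0], [0.9, 3.0], [0.2, 8.0], [0.8, 1.0], [0.3, 2.0], [0.7, 9.0]]
y = [0, 1, 0, 1, 0, 1]
print(permutation_importance(black_box, X, y))  # second value (feature 1) is 0.0
```

The explanation comes purely from the model's behaviour, so the same check works on any classifier whose internals are opaque.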

Data Democratization

The movement toward data democratization is gaining momentum, allowing employees beyond data scientists to use analytics in their daily tasks. This trend fosters work environments in which insights are accessible to more employees, enhancing productivity and decentralizing decision-making.

Autonomous Customers

By 2028, an estimated 15 billion connected products are expected to have the potential to act as autonomous customers. This development signals a corresponding shift toward more automated business processes, which could account for a growing share of corporate revenue in the coming years.

AI in Retail

AI is increasingly being integrated into retail operations, enhancing demand forecasting through the analysis of historical sales data and market trends. This application contributes to more efficient supply chains and helps retailers optimize inventory management and reduce waste.
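A classical baseline for forecasting demand from historical sales is simple exponential smoothing, in which recent observations count more than older ones. AI-based retail systems typically use far richer models and inputs; this sketch only illustrates the idea of learning a forecast from a sales history. The weekly figures and the smoothing factor `alpha` are hypothetical.

```python
def ses_forecast(history: list[float], alpha: float) -> float:
    """One-step-ahead forecast from simple exponential smoothing.

    The level starts at the first observation and is updated as
    level = alpha * observation + (1 - alpha) * level.
    """
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    level = history[0]
    for observation in history[1:]:
        level = alpha * observation + (1 - alpha) * level
    return level


# Hypothetical weekly unit sales for one product.
weekly_units = [100, 120, 110, 130]
print(ses_forecast(weekly_units, alpha=0.5))  # 120.0
```

A larger `alpha` reacts faster to recent changes in demand; a smaller one smooths out week-to-week noise.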

Cloud-Based Operations

As businesses increasingly migrate to cloud platforms, data science and AI are expected to thrive in cloud-based environments. This shift not only enhances data accessibility and collaboration, but also supports the deployment of advanced analytical tools and techniques.

Ethical AI

With the growing adoption of AI technologies, there is an increased emphasis on the ethical implications of AI applications. Addressing algorithmic bias and ensuring fairness in AI systems is becoming a focus of attention.
